By 2026, 40% of enterprise applications will include task-specific AI agents, up from less than 5% in 2025, according to Gartner. At the same time, the same research firm warns that more than 40% of agentic AI projects will be canceled by the end of 2027, killed by escalating costs, unclear business value, and governance failures.

That gap between the opportunity and the outcome is not a technology problem. It is an architecture problem.

Most enterprises entering this space are building on the wrong foundation. They are wrapping autonomous agents around legacy workflows, choosing frameworks based on vendor marketing rather than technical fit, and deploying production systems without the orchestration and governance layers that separate pilots from platforms. The result is what Gartner has called “agent washing”: AI deployments that sound agentic but behave like expensive automation scripts.

Agentic AI architecture is the structural design discipline that prevents this outcome. It defines how AI agents perceive their environment, plan and execute multi-step tasks, coordinate with other agents, access tools and data, and operate within governance boundaries, all without continuous human intervention. Getting this architecture right is the difference between an enterprise AI system that delivers 171% average ROI (the figure organizations currently project from mature agentic deployments, according to Onereach.ai’s 2025 enterprise survey) and one that joins the 40% of canceled projects.

This guide covers the architecture end to end: what it is, why it matters in 2026, how it works at a component level, what it costs, where it breaks, and how enterprises that have gotten it right actually built it.

In This Guide

- What Is Agentic AI Architecture?

- Why Enterprises Need Agentic AI Architecture Now

- Core Components of Agentic AI Architecture

- Agentic AI Architecture Patterns and Design Models

- How to Build Agentic AI Architecture: Step-by-Step

- Agentic AI Architecture Costs and Implementation Timeline

- Common Challenges and How to Overcome Them

- Agentic AI Architecture Use Cases by Industry

- How PrimeFirms Builds Agentic AI Architecture for Enterprise

- Frequently Asked Questions About Agentic AI Architecture

What Is Agentic AI Architecture?

Agentic AI architecture is the structural design framework that enables AI agents to plan, reason, and execute complex tasks autonomously within enterprise environments. It comprises interconnected layers (reasoning engines, memory systems, orchestration layers, and tool integration frameworks) that allow one or more AI agents to coordinate and achieve business goals without continuous human oversight.

This definition matters because it draws a hard line between what agentic AI architecture is and what it is often confused with. A chatbot that retrieves data and formats a response is not agentic. An RPA bot that executes predefined scripts is not agentic. An AI assistant that helps an employee draft an email is not agentic. Agentic systems pursue goals across multiple steps, manage their own reasoning loops, use external tools when needed, and adapt their behavior based on the outcomes they observe.

The architecture is what makes this autonomous behavior reliable, observable, and safe at enterprise scale.

Unlike traditional software architecture, which defines data flows between static services, agentic AI architecture must account for systems that reason, plan, and make decisions dynamically. This introduces design challenges that conventional architectural patterns do not address. How does an agent maintain state across a multi-hour workflow? How does an orchestration layer coordinate ten specialized agents without creating race conditions? How does a governance layer intercept an agent before it takes an irreversible action? Agentic AI architecture answers these questions before the system is built, not after it fails in production.

Agentic AI vs. Traditional AI Systems

The distinction is not subtle. Traditional AI systems operate in a request-response model: input comes in, the model processes it, output goes out, the cycle ends. The human orchestrates every step. Traditional systems are reactive by design.

Agentic systems are goal-directed. A human (or a higher-level orchestrator) defines an objective: “research these 50 vendors, score them against our criteria, draft a shortlist with justifications, and schedule review calls with the top five.” The agentic system then plans the steps required, executes each step, evaluates the result, adjusts if something goes wrong, and drives toward the objective without waiting for instruction at each stage. The human checks the final output, not every intermediate step.

That shift, from orchestration by humans to goal-directed autonomy by AI, is what makes the architectural layer beneath it so consequential.

Why Enterprises Need Agentic AI Architecture Now

The timing argument for agentic AI architecture in 2026 is not about trend-following. It is about competitive positioning in a window that is closing faster than most executive teams realize.

Gartner’s data from August 2025 is unambiguous: 40% of enterprise applications will embed task-specific AI agents by the end of 2026. Organizations that have not established their agentic AI architecture by mid-2026 will be entering a market where their competitors have 12–18 months of production learning, agent performance data, and institutional knowledge that cannot be replicated quickly. This is not the same as being late to adopt cloud infrastructure: the operational advantage of early, well-built agentic systems compounds over time because agents improve as they accumulate operational experience.

Beyond competition, there is a cost-structure argument. McKinsey’s research on agentic AI shows that organizations deploying autonomous systems in high-volume repetitive workflows (claims processing, contract review, customer onboarding, incident response) are achieving 4–7x productivity multipliers in those specific processes. The arithmetic is straightforward: a process that requires eight hours of skilled labor can be executed by a well-designed agentic system in minutes, with human review reserved for the cases the system flags as requiring judgment. At enterprise scale, that translates to measurable EBITDA improvement, not marginal efficiency gains.

The regulatory environment adds urgency. The EU AI Act, in force since August 2024, has most of its obligations applying from August 2026, bringing risk-based classification requirements, transparency mandates, and audit obligations for AI systems operating in high-risk categories. Enterprises building agentic AI architecture now have the opportunity to embed compliance requirements into the design: governance layers, audit trails, explainability hooks. Enterprises that delay building this architecture will face the far more expensive task of retrofitting compliance into systems already in production.

Finally, there is the infrastructure maturity argument. The frameworks, protocols, and tooling required to build production-grade agentic AI architecture reached operational maturity in 2025. The Model Context Protocol (MCP), established by Anthropic and adopted widely throughout 2025, standardizes how agents connect to external tools, databases, and APIs, eliminating the custom integration work that made early agentic systems brittle. LangGraph, AutoGen, and CrewAI have each released enterprise-grade versions with stateful orchestration, error recovery, and observability built in. The conditions for building agentic systems that actually work in production have never been better.

The risk of waiting is not falling behind on a technology trend. It is spending 2027 and 2028 building what competitors already have running.

Core Components of Agentic AI Architecture

Production-grade agentic AI architecture is built from five interconnected components. Each serves a distinct function. Each has specific design requirements. The failure of any one component undermines the entire system, which is why Gartner’s projected 40% cancellation rate traces back, in most cases, to architectural gaps rather than model limitations.


The Reasoning Engine

The reasoning engine is the cognitive core of the agentic system. It uses a large language model (LLM) to interpret goals, generate plans, evaluate options, and decide what to do next. The choice of LLM and reasoning strategy here is the first architectural decision, and it carries the most downstream consequences.

In 2026, the leading models for enterprise agentic reasoning are Claude Opus (optimized for complex multi-step planning, achieving 80.9% on SWE-bench Verified), GPT-5, and Gemini 3 Pro (which leads on multimodal tasks requiring vision and audio). The model selection is not about brand preference; it is about matching reasoning capability to the specific task profile the agent will handle. An agent that needs to review legal documents and flag compliance issues has different reasoning requirements than one that monitors infrastructure and coordinates incident response.

The reasoning strategy is equally consequential. ReAct (Reasoning + Acting) remains the dominant pattern for enterprise agents because it alternates between reasoning steps and action steps, allowing the agent to observe outcomes before deciding next actions. Chain-of-Thought reasoning improves accuracy on complex planning tasks by forcing the model to externalize its logic. In high-stakes workflows, enterprises are now implementing reflection loops (steps where the agent evaluates its own output before acting on it), which significantly reduce error rates in production.
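The ReAct loop with a reflection step can be sketched in a few lines of Python. This is a minimal illustration, not any framework's implementation: `scripted_model` stands in for an LLM call, and the `lookup_price` tool and the grounding check in `reflect` are hypothetical.

```python
from typing import Callable

# Hypothetical tool registry: name -> callable. Not taken from any real framework.
TOOLS: dict[str, Callable[[str], str]] = {
    "lookup_price": lambda sku: {"A1": "19.99"}.get(sku, "unknown"),
}

def scripted_model(history: list[str]) -> dict:
    """Stand-in for an LLM call: picks the next step from the transcript so far."""
    if not any(line.startswith("observation:") for line in history):
        return {"thought": "I need the price first.",
                "action": "lookup_price", "input": "A1"}
    price = history[-1].split(": ", 1)[1]
    return {"thought": "I have what I need.", "final": f"The price is {price}"}

def reflect(answer: str, history: list[str]) -> bool:
    """Reflection step: accept the answer only if it cites an observed value."""
    observed = [h.split(": ", 1)[1] for h in history if h.startswith("observation:")]
    return any(value in answer for value in observed)

def react_loop(goal: str, model=scripted_model, max_steps: int = 5) -> str:
    history = [f"goal: {goal}"]
    for _ in range(max_steps):
        step = model(history)                          # reason
        history.append(f"thought: {step['thought']}")
        if "final" in step:
            if reflect(step["final"], history):        # reflect before committing
                return step["final"]
            history.append("observation: answer rejected by reflection")
            continue
        result = TOOLS[step["action"]](step["input"])  # act
        history.append(f"observation: {result}")       # observe
    return "escalate: step budget exhausted"
```

The step budget matters as much as the loop itself: an agent that cannot finish within `max_steps` hands off instead of looping indefinitely.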

Memory and State Management

This is the component most often underestimated in early agentic implementations and most often responsible for production failures. Agents that cannot remember what they did, what they observed, and what they decided cannot execute multi-step workflows reliably.

Enterprise agentic architecture requires two distinct memory layers. Short-term (in-context) memory holds the active context of the current task: the goal, the steps taken so far, the current state, the last tool output. This lives in the model’s context window and resets when the task ends. Long-term (persistent) memory holds information across sessions and across agents: customer history, prior decisions, learned patterns, organizational knowledge. This requires a vector database (Pinecone, Weaviate, Chroma) or a graph database, and it must be managed as carefully as any enterprise data asset.

The architectural failure mode here is treating memory as an afterthought. Systems that rely entirely on the model’s context window break when tasks exceed context limits, when agents need to share information with other agents, or when a multi-day workflow is interrupted and must resume from a known state. Long-term memory architecture must be designed upfront, not bolted on after the first production incident.
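To make the two layers concrete, here is a minimal sketch under simplifying assumptions: a bounded deque plays the role of the context window, a plain list stands in for the vector or graph database, and word overlap stands in for similarity search. The class and method names are illustrative only.

```python
from collections import deque

class AgentMemory:
    """Two memory layers: a bounded working context and a persistent store."""

    def __init__(self, context_limit: int = 4):
        # Short-term: bounded like a context window; the oldest entries fall out.
        self.short_term: deque[str] = deque(maxlen=context_limit)
        # Long-term: a plain list standing in for a vector or graph database.
        self.long_term: list[str] = []

    def observe(self, entry: str) -> None:
        self.short_term.append(entry)

    def end_task(self) -> None:
        """Persist what is still in the working context, then reset it."""
        self.long_term.extend(self.short_term)
        self.short_term.clear()

    def recall(self, query: str, k: int = 2) -> list[str]:
        """Rank stored entries by shared words (a crude stand-in for similarity search)."""
        words = set(query.lower().split())
        scored = [(len(words & set(e.lower().split())), e) for e in self.long_term]
        ranked = sorted(scored, key=lambda pair: -pair[0])[:k]
        return [entry for score, entry in ranked if score > 0]
```

Note what the sketch loses: anything pushed out of the bounded context before `end_task` never reaches long-term storage, which is exactly the failure mode described above when persistence is left to the context window.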

Tool Integration Layer

Agents without tools can reason but cannot act. The tool integration layer is what connects the agent’s reasoning to real-world execution: API calls, database queries, code execution, file operations, web browsing, communication systems, and enterprise application integrations.

The Model Context Protocol (MCP), which reached broad enterprise adoption in 2025, fundamentally changed how this layer is built. Before MCP, each tool integration required a custom connector, a brittle, maintenance-heavy approach that made production agentic systems fragile. MCP provides a universal interface standard, comparable to USB-C for hardware, that allows any MCP-compatible tool to be connected to any MCP-compatible agent with a single implementation. Organizations building tool integration layers on MCP in 2026 are eliminating the integration overhead that made early agentic systems expensive to maintain.

Tool authorization is the governance consideration in this layer. Agents must have the minimum permissions necessary to execute their assigned tasks, never broad system access. Every tool call should be logged, and high-impact tool calls (those that modify data, send communications, or make financial transactions) should require either human approval or confirmation from a guardian agent before execution.
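These three rules (least privilege, log everything, gate high-impact calls) fit in one small wrapper. The sketch below is an assumption-laden illustration: `ToolGateway`, its fields, and the `approve` hook are hypothetical names, and the approval hook could be a human queue or a guardian agent.

```python
from dataclasses import dataclass, field
from typing import Callable

@dataclass
class ToolGateway:
    """Checks permissions, logs every call, and gates high-impact tools."""
    permissions: dict[str, set[str]]            # agent name -> allowed tool names
    high_impact: set[str]                       # tools that need approval first
    approve: Callable[[str, str, dict], bool]   # human or guardian-agent hook
    log: list[dict] = field(default_factory=list)

    def call(self, agent: str, tool: str, fn: Callable[..., object], **args):
        record = {"agent": agent, "tool": tool, "args": args}
        self.log.append(record)                 # logged whether allowed or not
        if tool not in self.permissions.get(agent, set()):
            record["outcome"] = "denied"
            raise PermissionError(f"{agent} may not call {tool}")
        if tool in self.high_impact and not self.approve(agent, tool, args):
            record["outcome"] = "rejected"
            return None
        record["outcome"] = "executed"
        return fn(**args)
```

An agent missing from `permissions` gets an empty set, so the default is no access rather than broad access.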

Orchestration Layer

In single-agent systems, the reasoning engine manages its own planning loop: the agent decides what to do, does it, observes the result, and continues. In multi-agent systems (which represent 66.4% of enterprise agentic deployments, according to Landbase’s 2025 analysis), this self-management is insufficient. The orchestration layer is the control plane that coordinates multiple agents, manages task delegation, handles error recovery, and ensures that agent collaboration produces coherent outcomes rather than conflicting actions.

Orchestration architectures in 2026 follow two primary patterns. Hierarchical orchestration places a “supervisor” agent above specialized worker agents: the supervisor decomposes goals, delegates tasks to the appropriate specialists, and synthesizes results. This pattern mirrors how human teams work and is the most intuitive for enterprise use cases. LangGraph and CrewAI both implement hierarchical orchestration natively. Decentralized orchestration allows agents to communicate directly via peer-to-peer protocols (Google’s Agent2Agent protocol, A2A, defines this communication standard), with coordination emerging from the agents’ interactions rather than being imposed top-down. This pattern is more resilient to individual agent failures but is harder to reason about and debug.

Gartner recorded a 1,445% surge in enterprise inquiries about multi-agent systems from Q1 2024 to Q2 2025. The orchestration design decision is the one most consequential for whether those systems actually work.

Governance and Safety Layer

This is the layer that Gartner identifies as the primary failure point in the 40% of agentic AI projects that are canceled. It is also the layer most often skipped in early implementations because it generates no visible output and slows time-to-demo.

The governance layer establishes what agents are allowed to do, enforces operational boundaries, maintains audit logs of every agent action and decision, and intercepts potentially harmful or out-of-scope actions before they execute. In regulated industries (healthcare, financial services, legal), this layer is not optional. In any enterprise where agentic systems have access to customer data, financial systems, or external communications, this layer determines whether the system can be trusted enough to run in production.

In 2026, the pattern that enterprise architects are converging on is the “guardian agent” model: a dedicated agent whose sole purpose is to monitor the actions of other agents, flag policy violations, and escalate to human oversight when the primary agents reach decisions outside their defined operational boundaries. Guardian agents are projected to represent 10–15% of the total agentic AI market by 2030, according to Gartner, a measure of how central the governance function has become to enterprise deployment.

Agentic AI Architecture Patterns and Design Models

The five components described above combine into architectural patterns that address different enterprise use cases and risk profiles. Choosing the right pattern is an architectural decision, not a technology preference.

Single-Agent with Tool Use

The simplest production-ready pattern. One agent, one reasoning loop, multiple tools. The agent receives a goal, plans the steps, calls tools as needed, and delivers an output. Suitable for: focused, well-defined tasks with predictable inputs (document review, data extraction, report generation, customer inquiry routing). Not suitable for: tasks that require parallel execution, specialized domain expertise across multiple fields, or workflows that run for hours to days.

This pattern is the correct starting point for most enterprise agentic implementations. It is the least complex, the fastest to deploy, and the easiest to observe and debug. Organizations that start with a multi-agent system without having mastered single-agent deployment consistently fail.

Supervisor-Worker Multi-Agent Architecture

The most widely deployed enterprise pattern in 2026. A supervisor agent receives the high-level goal, decomposes it into subtasks, delegates each subtask to a specialized worker agent, monitors progress, handles errors, and synthesizes the results. Worker agents are domain specialists (a research agent, a writing agent, a compliance verification agent, a data analysis agent), each optimized for its specific function.

This pattern enables parallelization (worker agents execute simultaneously where tasks are independent), specialization (each agent uses the best model and reasoning strategy for its function), and modularity (new capabilities can be added by adding new worker agents without restructuring the system). It is the pattern behind the enterprise AI systems currently delivering the documented 4–7x productivity multipliers in complex knowledge workflows.
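A stripped-down sketch of the supervisor-worker shape, using only the Python standard library. The worker functions are hypothetical stand-ins for LLM-backed agents, and the decomposition is deliberately trivial (every worker receives the whole goal); a real supervisor would plan the subtasks.

```python
from concurrent.futures import ThreadPoolExecutor

# Hypothetical workers: plain functions standing in for LLM-backed agents.
def research_agent(topic: str) -> str:
    return f"findings on {topic}"

def compliance_agent(topic: str) -> str:
    return f"no policy issues found for {topic}"

WORKERS = {"research": research_agent, "compliance": compliance_agent}

def supervisor(goal: str) -> dict:
    """Decompose the goal, delegate in parallel, and synthesize the results."""
    subtasks = {role: goal for role in WORKERS}      # trivial decomposition
    results, errors = {}, {}
    with ThreadPoolExecutor() as pool:
        futures = {role: pool.submit(WORKERS[role], task)
                   for role, task in subtasks.items()}
        for role, future in futures.items():
            try:
                results[role] = future.result(timeout=30)
            except Exception as exc:                 # one failed worker does not sink the rest
                errors[role] = str(exc)
    summary = "; ".join(f"{role}: {out}" for role, out in sorted(results.items()))
    return {"goal": goal, "summary": summary, "errors": errors}
```

Adding a capability means adding an entry to `WORKERS`; the supervisor logic does not change, which is the modularity argument made above.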

Hierarchical Agentic Ecosystem

The advanced pattern for enterprises deploying AI at organizational scale. Multiple supervisor-worker clusters, each responsible for a business domain (finance, operations, customer success, HR), connected by an enterprise orchestration layer that enables cross-domain agent collaboration. This is the pattern behind Gartner’s projection that agentic AI will account for roughly 30% of enterprise application software revenue by 2035. The hierarchical ecosystem is the architecture of the AI-native enterprise, not just the AI-augmented one.

Building this pattern requires significant data infrastructure investment (the shared memory and context layer that connects agents across domains), robust governance architecture, and an organizational change management program that redefines human roles in the agent-assisted operating model.

Event-Driven Agentic Architecture

Agents that respond to events rather than explicit invocations. When a specific condition is met (a financial threshold is crossed, a customer sentiment score drops below a threshold, an infrastructure metric indicates an anomaly), the event triggers an agent to investigate, act, and report. This pattern is particularly powerful for monitoring and incident response use cases where the latency of human detection is the limiting factor in response quality.
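The trigger mechanism can be illustrated with an in-process publish/subscribe sketch. Everything here is illustrative: `EventBus`, `sentiment_agent`, and the 0.3 threshold are assumptions, and a production system would use a message broker rather than a Python dictionary.

```python
from collections import defaultdict
from typing import Callable

class EventBus:
    """Minimal in-process publish/subscribe; a message broker would replace this."""

    def __init__(self) -> None:
        self.handlers: dict[str, list[Callable[[dict], str]]] = defaultdict(list)

    def subscribe(self, event_type: str, handler: Callable[[dict], str]) -> None:
        self.handlers[event_type].append(handler)

    def publish(self, event_type: str, payload: dict) -> list[str]:
        # Each subscribed agent handles the event and returns a report.
        return [handler(payload) for handler in self.handlers[event_type]]

def sentiment_agent(payload: dict) -> str:
    """Hypothetical agent triggered when a sentiment score drops too low."""
    return f"opened retention case for {payload['customer']}"

def check_sentiment(bus: EventBus, customer: str, score: float,
                    threshold: float = 0.3) -> list[str]:
    # The condition check emits the event; nothing invokes the agent directly.
    if score < threshold:
        return bus.publish("sentiment_drop", {"customer": customer, "score": score})
    return []
```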

How to Build Agentic AI Architecture: Step-by-Step

The enterprises that successfully move from agentic AI pilot to production do not start with the most ambitious use case. They start with the right one, and they build the architectural foundation before they build the application.

Step 1: Define the Use Case with Architectural Fitness Criteria

Not every workflow benefits from agentic AI. The workflows that deliver the highest ROI from agentic implementation share specific characteristics: they are multi-step, they require some degree of judgment (not pure rule execution), they currently consume significant skilled human time, and their inputs and success criteria can be clearly defined. Before selecting a use case, evaluate it against these criteria. The worst architectural decision in agentic AI is starting with the wrong problem.

Step 2: Audit Your Data and Tool Infrastructure

Agentic systems that cannot access high-quality, real-time enterprise data cannot make good decisions. Before designing the agent architecture, conduct a data readiness audit: What data do agents need to access? Where does it live? How fresh is it? What integration points exist (APIs, databases, enterprise applications)? What access controls are in place? Data infrastructure gaps are the most common cause of agentic systems performing well in testing and failing in production, where data freshness, access latency, and permission models all behave differently than in sandboxed environments.

Step 3: Design the Memory Architecture

Before writing a single line of agent code, document the memory requirements for your target use case. Identify: what information must persist across sessions, what information must be shared across agents, what the freshness requirements are for retrieved context, and what the privacy/compliance requirements are for stored agent memory. Select vector database infrastructure (Pinecone, Weaviate, or Chroma are the most production-battle-tested options in 2026) and define the data schemas that will store agent state.

Step 4: Select Framework and LLM Stack

Framework selection is the architectural decision with the longest-lasting operational consequences. The leading enterprise frameworks in 2026 are LangGraph (best for stateful, graph-based workflows with complex branching logic), AutoGen (best for conversational multi-agent patterns and rapid prototyping), CrewAI Enterprise (best for role-based agent teams with human-in-the-loop workflows), and Semantic Kernel (best for enterprises already on the Microsoft Azure stack requiring deep Office 365 integration). The wrong framework choice is recoverable but expensive: teams typically spend 6–10 weeks migrating between frameworks when they discover fundamental capability mismatches with their use case in production.

Step 5: Build and Test the Single-Agent Prototype

Start with one agent, one tool, one clearly defined task. Get this to production quality with error handling, logging, and observable behavior before adding complexity. The discipline of mastering single-agent production deployment teaches the team the debugging, observability, and failure-recovery patterns they will need at scale. Organizations that skip this step and build multi-agent systems immediately consistently underestimate the debugging complexity of emergent multi-agent behavior.

Step 6: Add Orchestration and Multi-Agent Coordination

Once the single-agent foundation is production-ready, add the orchestration layer. Define agent roles, communication protocols (MCP for tool access, A2A or framework-native protocols for agent-to-agent communication), and error recovery logic. Test agent coordination under failure conditions: What happens when a worker agent returns an unexpected result? What happens when a tool call fails after three retries? The orchestration layer’s resilience to failure determines the system’s production reliability.
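One possible answer to the three-retries question, sketched with the standard library only. The names `call_with_retries`, `ToolCallFailed`, and `run_subtask` are hypothetical, and `base_delay` defaults to zero so the example runs instantly; a real deployment would set a non-zero backoff.

```python
import time

class ToolCallFailed(Exception):
    """Raised when a tool keeps failing after all retries are used."""

def call_with_retries(tool, *args, retries: int = 3, base_delay: float = 0.0):
    """Try once, then retry up to `retries` times with exponential backoff."""
    last_error = None
    for attempt in range(retries + 1):
        try:
            return tool(*args)
        except Exception as exc:
            last_error = exc
            if attempt < retries:
                time.sleep(base_delay * (2 ** attempt))
    raise ToolCallFailed(f"gave up after {retries} retries") from last_error

def run_subtask(tool, payload: str) -> dict:
    """Orchestrator-side recovery: a dead tool becomes an escalation, not a crash."""
    try:
        return {"status": "ok", "result": call_with_retries(tool, payload)}
    except ToolCallFailed as exc:
        return {"status": "escalated", "reason": str(exc)}
```

The design choice worth testing is the last line: when retries run out, the orchestrator receives a structured escalation it can route to a human or another agent, rather than an unhandled exception.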

Step 7: Implement Governance Before Scaling

The governance layer must be operational before the system handles real business data or makes decisions with real consequences. Define agent permission boundaries (what tools each agent can access, what data it can read and write, what actions require human approval), implement audit logging for all agent actions and decisions, and establish the escalation protocols for out-of-scope or ambiguous situations. Build the guardian agent that monitors production agent behavior and flags anomalies. This step is not glamorous, but skipping it is the fastest path to becoming one of Gartner’s 40% canceled projects.

Step 8: Deploy, Monitor, and Iterate

Production deployment of agentic systems requires observability infrastructure that most enterprise teams have not built for AI workloads. Implement distributed tracing across agent interactions (LangSmith, Weights & Biases, and Arize AI are the leading observability platforms for agentic systems in 2026), define performance SLAs (latency, task completion rate, escalation rate, error rate), and establish a feedback loop that incorporates production observations into agent improvement cycles. Agentic systems improve with operational experience, but only if the observability infrastructure captures the right data.
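As a rough illustration of the four SLA metrics, the sketch below aggregates them from per-task records. The `TaskRecord` fields and the simple percentile index are assumptions; an observability platform would compute these from traces.

```python
from dataclasses import dataclass

@dataclass
class TaskRecord:
    latency_s: float
    completed: bool
    escalated: bool
    errored: bool

def sla_report(records: list[TaskRecord]) -> dict:
    """Aggregate latency, completion, escalation, and error rates per batch."""
    n = len(records)
    if n == 0:
        return {"tasks": 0}
    latencies = sorted(r.latency_s for r in records)
    p95_index = min(n - 1, int(0.95 * n))   # simple index-based approximation
    return {
        "tasks": n,
        "p95_latency_s": latencies[p95_index],
        "completion_rate": sum(r.completed for r in records) / n,
        "escalation_rate": sum(r.escalated for r in records) / n,
        "error_rate": sum(r.errored for r in records) / n,
    }
```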

Agentic AI Architecture Costs and Implementation Timeline

Cost transparency is where most enterprise AI vendor content fails, either by providing ranges so broad they are meaningless or by omitting the infrastructure costs that dominate total project spend. The figures below are based on actual enterprise implementation patterns, with the variables that drive cost variation made explicit.

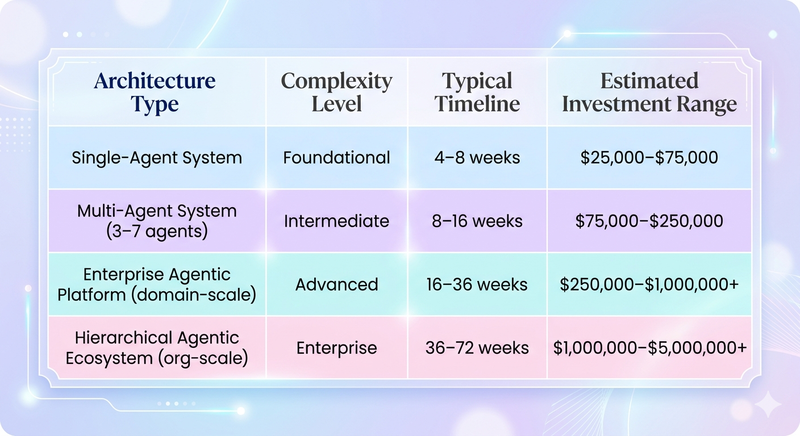

Ranges reflect variation by industry, data infrastructure maturity, integration complexity, governance requirements, and team composition. Healthcare and financial services implementations carry 20–35% compliance cost premiums. Organizations with mature data infrastructure and existing API layers typically land in the lower third of each range.

What drives cost up:

Integrating agentic systems with legacy enterprise applications (SAP, Salesforce, Oracle, ServiceNow) without modern API layers is the single largest unexpected cost driver in enterprise agentic implementations. Organizations that discover mid-project that their core systems require custom connectors rather than MCP-compatible integrations typically see 40–60% cost overruns on the integration component alone.

AI infrastructure costs (LLM API calls, vector database storage, and compute for long-running orchestration workflows) are a recurring operational expense that must be modeled before project approval. A multi-agent system executing 10,000 complex tasks per day with GPT-5 or Claude Opus incurs inference costs of $15,000–$40,000 per month at current pricing. This is the cost category most often missing from initial business cases and most often responsible for post-launch ROI disappointment.
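The monthly figure is easier to reason about per task. The sketch below works backward from the range quoted above, assuming a 30-day month, and adds a token-based estimator whose token counts and per-million-token prices are parameters you supply; no real price list is assumed.

```python
def implied_cost_per_task(monthly_cost_usd: float, tasks_per_day: int,
                          days: int = 30) -> float:
    """Back out the per-task cost from a monthly inference bill."""
    return monthly_cost_usd / (tasks_per_day * days)

def cost_per_task_from_tokens(input_tokens: int, output_tokens: int,
                              input_price_per_m: float,
                              output_price_per_m: float) -> float:
    """Estimate one task's cost from token counts and per-million-token prices."""
    return (input_tokens / 1_000_000 * input_price_per_m
            + output_tokens / 1_000_000 * output_price_per_m)

def monthly_inference_cost(tasks_per_day: int, cost_per_task_usd: float,
                           days: int = 30) -> float:
    return tasks_per_day * days * cost_per_task_usd

# 10,000 tasks/day is 300,000 tasks/month, so the quoted range implies:
low = implied_cost_per_task(15_000, 10_000)    # $0.05 per task
high = implied_cost_per_task(40_000, 10_000)   # roughly $0.13 per task
```

Run against your own token counts and vendor prices, `cost_per_task_from_tokens` shows quickly whether a workload lands inside or outside that five-to-thirteen-cent band.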

What drives cost down:

Organizations that invest in thorough use case selection (Step 1 above) consistently realize 30–50% lower total project costs because they avoid mid-project scope changes. Using MCP-compatible tool ecosystems rather than custom integrations reduces integration cost by an estimated 40–60% versus bespoke approaches. Starting with a single-agent prototype before scaling to multi-agent architecture accelerates the learning curve and reduces rework in later phases.

The IDC projection that year-over-year AI spending will grow by 31.9% between 2025 and 2029 reflects, in part, enterprises absorbing the infrastructure costs they initially underestimated. Organizations that model these costs accurately upfront are the ones achieving the 171% average ROI figure because their business case was built on realistic assumptions, not optimistic ones.

Common Challenges and How to Overcome Them

The honest picture of enterprise agentic AI implementation includes the failure modes. The 40% project cancellation rate Gartner projects is not an industry anomaly. It reflects real architectural, organizational, and strategic mistakes that are consistent enough to be predictable.

Challenge 1: Legacy System Integration

Traditional enterprise systems were not designed for agentic interactions. Most large ERP and CRM platforms expose limited, inconsistent APIs, require synchronous request-response patterns incompatible with long-running agentic workflows, and have data models that agents cannot interpret without significant pre-processing. Gartner estimates that legacy integration complexity is the primary technical driver of the 40% failure rate: systems that cannot support real-time execution, modern API contracts, or secure identity management for agents cannot be extended with agentic capabilities without foundational changes.

The solution: Conduct a system integration audit before project approval. Map every data source and system the agent will need to access. Identify the integration pattern available for each (native API, MCP connector, direct database access, or custom development). Build integration complexity into the cost and timeline model. For organizations where legacy system modernization is not feasible in the project timeline, consider a data layer approach: a modern API layer deployed in front of legacy systems that agents interact with, rather than the legacy systems directly.

Challenge 2: Agent Hallucination and Reliability

Agents that make confident errors are more dangerous than agents that admit uncertainty. In production enterprise environments, where agent decisions trigger real emails, financial transactions, system changes, and customer interactions, an agent that confidently executes a wrong plan causes real business harm. The hallucination problem in LLMs is reduced by architecture, not eliminated.

The solution: Implement reflection loops where agents evaluate their own output before acting. Use structured output schemas (agents return decisions in defined formats that validation logic can check before execution). Implement confidence thresholds: actions below a defined confidence level route to human review rather than auto-executing. Build tool call verification: before executing a high-impact action, the agent confirms the action with a brief restatement of what it is about to do and why. These patterns add latency but reduce the production error rate that, left unchecked, destroys organizational trust in agentic systems faster than any technical failure.
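Structured output checks and confidence thresholds combine naturally into one routing function. In this sketch the schema fields and the 0.85 threshold are assumptions chosen for illustration, and the confidence value is whatever the agent reports, which may not be well calibrated.

```python
import json

REQUIRED_FIELDS = {"action": str, "target": str, "confidence": float, "rationale": str}
CONFIDENCE_THRESHOLD = 0.85   # assumed value; set per workflow and risk level

def parse_decision(raw: str) -> dict:
    """Validate the agent's JSON output against the expected schema."""
    decision = json.loads(raw)
    if not isinstance(decision, dict):
        raise ValueError("decision must be a JSON object")
    for name, expected in REQUIRED_FIELDS.items():
        if not isinstance(decision.get(name), expected):
            raise ValueError(f"field {name!r} missing or not {expected.__name__}")
    if not 0.0 <= decision["confidence"] <= 1.0:
        raise ValueError("confidence must be between 0 and 1")
    return decision

def route(raw: str) -> str:
    """Return 'execute' or 'human_review'; malformed output never auto-executes."""
    try:
        decision = parse_decision(raw)
    except ValueError:            # json.JSONDecodeError is a ValueError subclass
        return "human_review"
    if decision["confidence"] >= CONFIDENCE_THRESHOLD:
        return "execute"
    return "human_review"
```

The important default is the failure path: output that does not parse or does not match the schema goes to a person, not to execution.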

Challenge 3: Memory and Context Degradation

Long-running agentic workflows accumulate context that exceeds model context windows, causing the agent to lose track of earlier decisions, repeat steps it already completed, or generate contradictory actions. This is a particular failure mode in workflows that run for hours to days: multi-document research tasks, complex procurement negotiations, extended customer service cases.

The solution: Architect persistent memory from day one (Step 3 of the implementation process). Use summarization agents that periodically compress accumulated context into structured memory entries stored in the vector database. Implement workflow checkpointing: structured state saves at defined intervals that allow interrupted workflows to resume from a known state rather than from scratch. The teams that design memory architecture before they design the agent logic are the teams that avoid this failure mode entirely.
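Workflow checkpointing can be sketched with a JSON file and an atomic rename. The function names and the state layout (`completed_steps`, `results`) are hypothetical; frameworks such as LangGraph ship their own checkpointers, which this does not attempt to reproduce.

```python
import json
import os
import tempfile

def save_checkpoint(path: str, state: dict) -> None:
    """Write state atomically so a crash never leaves a half-written checkpoint."""
    directory = os.path.dirname(os.path.abspath(path))
    fd, tmp_path = tempfile.mkstemp(dir=directory, suffix=".tmp")
    with os.fdopen(fd, "w") as handle:
        json.dump(state, handle)
    os.replace(tmp_path, path)          # atomic rename on the same filesystem

def load_checkpoint(path: str) -> dict:
    if not os.path.exists(path):
        return {"completed_steps": [], "results": {}}
    with open(path) as handle:
        return json.load(handle)

def run_workflow(steps: dict, path: str) -> dict:
    """Run steps in order, skipping any that a previous run already completed."""
    state = load_checkpoint(path)
    for name, step in steps.items():
        if name in state["completed_steps"]:
            continue
        state["results"][name] = step(state["results"])
        state["completed_steps"].append(name)
        save_checkpoint(path, state)    # checkpoint after every step
    return state
```

If the process dies during step three, the next call to `run_workflow` with the same path picks up at step three with the earlier results intact. Step results must be JSON-serializable for this particular sketch to work.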

Challenge 4: Multi-Agent Coordination Failures

When multiple agents work on interdependent tasks, coordination errors (duplicate work, contradictory outputs, race conditions where two agents modify the same resource simultaneously) create outputs that are worse than what a single, simpler agent would have produced. The complexity premium of multi-agent systems only pays off when the orchestration architecture manages these interactions correctly.

The solution: Define explicit agent communication contracts before building: what information each agent sends, what information each agent expects, in what format, and on what trigger. Implement idempotent tool calls (calling the same tool twice with the same parameters produces the same result, without unintended side effects). Use LangGraph’s state management or CrewAI’s task dependency framework to enforce execution order where task sequencing matters. Treat multi-agent system design as distributed systems engineering, because that is what it is.
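Idempotency is commonly achieved with an idempotency key derived from the call's parameters. The wrapper below is a single-process sketch with hypothetical names; across multiple agents, the `completed` map would need to live in a shared store with an atomic check-and-set, and this version is not thread-safe.

```python
import hashlib
import json

class IdempotentTool:
    """Wraps a side-effecting tool so repeated identical calls run it only once."""

    def __init__(self, fn):
        self.fn = fn
        self.completed: dict[str, object] = {}   # idempotency key -> stored result

    @staticmethod
    def key_for(params: dict) -> str:
        # Identical parameters hash to the same key regardless of argument order.
        canonical = json.dumps(params, sort_keys=True)
        return hashlib.sha256(canonical.encode()).hexdigest()

    def call(self, **params):
        key = self.key_for(params)
        if key not in self.completed:
            self.completed[key] = self.fn(**params)
        return self.completed[key]
```

With this in place, a supervisor that retries a delegated "send email" task after a timeout gets the stored result back instead of sending the email twice.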

Challenge 5: Governance and Compliance Gaps

Enterprises in regulated industries (healthcare, financial services, insurance, legal) that deploy agentic systems without purpose-built governance architecture face regulatory exposure that can dwarf the value the system generates. The EU AI Act’s enforcement, the SEC’s guidance on AI in financial decision-making, and HIPAA’s requirements for AI systems handling protected health information all create specific obligations that cannot be addressed retroactively in a live production system.

The solution: Engage legal and compliance stakeholders in the architecture design phase, not the pre-launch review phase. Build audit logging into the orchestration layer. Every agent decision, every tool call, and every inter-agent communication should be logged with sufficient context to reconstruct the agent’s reasoning. Implement human-in-the-loop checkpoints for decisions that cross risk thresholds defined by the compliance team. Design the governance layer to generate the reports your auditors will ask for before they ask.

Agentic AI Architecture Use Cases by Industry

The abstract promise of agentic AI becomes concrete when you see where it is already working at production scale in 2026.

Financial Services

Investment banks and insurance carriers are deploying agentic AI in three high-value areas: credit underwriting (agents that gather financial data from multiple sources, run risk models, generate underwriting summaries, and flag exceptions for human review, cutting a process that previously took 4–6 hours to under 30 minutes); regulatory compliance monitoring (agents that continuously monitor transaction flows against evolving regulatory requirements and generate exception reports); and claims processing (agents that gather documentation, assess coverage eligibility, calculate settlement ranges, and coordinate with counterparties). According to Accenture, AI applications in financial services could generate $170 billion in annual value by 2028. Agentic systems executing complex, judgment-required workflows are where the majority of that value concentrates.

Healthcare

Healthcare organizations are using agentic architecture primarily in clinical operations and revenue cycle management. Clinical documentation agents capture patient encounter data, draft clinical notes, flag potential drug interactions, and route documentation for physician review, freeing physicians from the administrative burden that currently accounts for 35% of their working time (American Medical Association, 2025). Revenue cycle agents coordinate eligibility verification, prior authorization, claims submission, and denial management across payer systems that vary in their API quality and data models. Accenture projects AI applications in healthcare generating up to $150 billion in annual savings for the industry by 2026.

Logistics and Supply Chain

Supply chain visibility has been a persistent challenge because the data required to manage it is distributed across dozens of carrier, customs, warehouse, and ERP systems. Agentic systems in logistics use event-driven architecture (a shipment delay event triggers an agent to assess downstream impact, identify alternative routing options, notify affected stakeholders, and update delivery estimates across customer-facing systems) to replace the multi-system manual coordination that currently makes supply chain exception management a labor-intensive, slow process. AWS customers at scale have documented agents replacing repetitive RPA tasks in logistics workflows with systems that handle exception logic the RPA bots could never manage.
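The event-driven pattern described above can be illustrated with a small in-process event bus. This is a sketch under stated assumptions: the Event and EventBus classes, the shipment.delayed event name, the action strings, and the 24-hour rerouting threshold are all invented for the example, and a production system would typically sit on a message broker rather than a Python dictionary.

```python
from collections import defaultdict
from dataclasses import dataclass
from typing import Callable, DefaultDict, Dict, List


@dataclass(frozen=True)
class Event:
    type: str
    payload: Dict[str, object]


Handler = Callable[[Event], List[str]]


class EventBus:
    def __init__(self) -> None:
        self._handlers: DefaultDict[str, List[Handler]] = defaultdict(list)

    def subscribe(self, event_type: str, handler: Handler) -> None:
        self._handlers[event_type].append(handler)

    def publish(self, event: Event) -> List[str]:
        # Collect the actions every subscribed agent decides to take.
        actions: List[str] = []
        for handler in self._handlers.get(event.type, []):
            actions.extend(handler(event))
        return actions


def shipment_delay_agent(event: Event) -> List[str]:
    shipment_id = event.payload["shipment_id"]
    delay_hours = event.payload["delay_hours"]
    actions = [f"assess_downstream_impact:{shipment_id}"]
    # Assumption: only delays of a day or more justify rerouting.
    if delay_hours >= 24:
        actions.append(f"propose_alternative_routing:{shipment_id}")
    actions.append(f"notify_stakeholders:{shipment_id}")
    actions.append(f"update_delivery_estimate:{shipment_id}:+{delay_hours}h")
    return actions


bus = EventBus()
bus.subscribe("shipment.delayed", shipment_delay_agent)
planned = bus.publish(Event("shipment.delayed",
                            {"shipment_id": "S-1", "delay_hours": 30}))
```

The point of the design is that nothing polls for delays: the agent does its work only when a delay event arrives, and new agents can subscribe to the same event without changing the publisher.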

Enterprise IT and DevOps

By 2029, Gartner projects 70% of enterprises will deploy agentic AI as part of IT infrastructure operations. The most immediate production deployments in 2026 are in incident response: agents that monitor system metrics, detect anomalies, correlate events across distributed systems, generate root cause hypotheses, implement standard remediation playbooks, and escalate to human engineers with full diagnostic context when automated remediation fails. Mean time to resolution (MTTR) improvements of 60–80% have been documented in production deployments of well-architected agentic incident response systems.

Legal and Professional Services

Contract analysis, due diligence, and regulatory research (all high-value, high-volume activities in large professional services organizations) are where agentic AI is delivering the most immediate ROI in this sector. Contract review agents that work through a 500-document M&A data room, extracting defined clause types, flagging risk provisions, and generating a structured risk summary, can reduce senior associate time on first-pass review by 70–80%. The architectural requirement here is rigorous: hallucination rates that are acceptable in a customer-facing chatbot are not acceptable in a legal document review context, making the reflection loops and structured output validation patterns described earlier non-negotiable.
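To make the combination of structured output validation and a reflection loop concrete, here is a minimal Python sketch. The field names, the allowed risk levels, the three-attempt limit, and the stub_generate function (which stands in for a real model call) are all assumptions chosen for illustration. The check that matters most in a legal context is the last one: the quoted clause must appear verbatim in the source document.

```python
import json
from typing import Callable, Dict, List, Optional

ALLOWED_RISK_LEVELS = {"low", "medium", "high"}
REQUIRED_FIELDS = ("clause_type", "risk_level", "source_quote")


def validate_extraction(raw: str, document_text: str) -> List[str]:
    """Returns a list of validation errors; an empty list means the output passes."""
    try:
        data = json.loads(raw)
    except json.JSONDecodeError:
        return ["output is not valid JSON"]
    if not isinstance(data, dict):
        return ["output is not a JSON object"]
    errors = [f"missing or non-string field: {name}"
              for name in REQUIRED_FIELDS if not isinstance(data.get(name), str)]
    risk = data.get("risk_level")
    if isinstance(risk, str) and risk not in ALLOWED_RISK_LEVELS:
        errors.append("risk_level must be low, medium, or high")
    quote = data.get("source_quote")
    # Grounding check: a quote that is not in the document is treated as invented.
    if isinstance(quote, str) and quote not in document_text:
        errors.append("source_quote does not appear in the document")
    return errors


def extract_with_reflection(generate: Callable[[str, List[str]], str],
                            document_text: str,
                            max_attempts: int = 3) -> Optional[Dict[str, str]]:
    feedback: List[str] = []
    for _ in range(max_attempts):
        raw = generate(document_text, feedback)  # feedback drives the retry
        feedback = validate_extraction(raw, document_text)
        if not feedback:
            return json.loads(raw)
    return None  # attempts exhausted: hand off to a human reviewer


def stub_generate(document_text: str, feedback: List[str]) -> str:
    # Hypothetical model: embellishes the quote first, corrects it after feedback.
    quote = ("Seller shall indemnify Buyer" if feedback
             else "Seller shall indemnify Buyer for all losses")
    return json.dumps({"clause_type": "indemnification",
                       "risk_level": "high", "source_quote": quote})


contract = "Section 9. Seller shall indemnify Buyer against third-party claims."
result = extract_with_reflection(stub_generate, contract)
```

The first attempt fails validation because the quote was embellished; the second attempt, given that feedback, passes. If every attempt failed, the function would return None and the document would go to a human reviewer rather than into the risk summary.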

How PrimeFirms Builds Agentic AI Architecture for Enterprise

At PrimeFirms, our agentic AI architecture engagements start with a question that most vendors skip: does this use case actually warrant an agentic architecture, or would a simpler system solve the problem with less risk?

That question reflects the hard lesson from watching organizations commit to multi-agent platforms for workflows that a single, well-prompted LLM call could handle. The discipline of right-sizing the architecture to the problem is what separates engagements that deliver on their business case from ones that generate impressive demos and disappointing production metrics.

Our architecture practice covers the full stack: use case validation and architectural fitness assessment, data and integration readiness auditing, memory architecture design and vector database implementation, framework selection and configuration (LangGraph, CrewAI, AutoGen, Semantic Kernel), multi-agent orchestration design and testing, governance layer implementation with audit logging and guardian agent configuration, and production deployment with observability infrastructure.

We have structured our delivery model around the step-by-step process described in this guide because it is the process that consistently produces production-ready systems rather than perpetual pilots. The single-agent prototype before multi-agent scale rule is non-negotiable in our engagements. It is the architectural discipline that, more than any other, predicts whether a project will be in the 60% that succeed or the 40% that do not.

For enterprises ready to move from agentic AI planning to production architecture, PrimeFirms offers a complimentary architecture assessment that covers use case validation, current system integration readiness, and a recommended implementation roadmap.

Frequently Asked Questions About Agentic AI Architecture

What is agentic AI architecture?

Agentic AI architecture is the structural design framework that enables AI systems to act autonomously toward defined goals across multi-step workflows. It defines how AI agents perceive their environment, access memory and tools, reason about decisions, coordinate with other agents, and operate within defined governance boundaries, all without requiring human direction at each step.

The critical distinction from conventional software architecture is that agentic systems must accommodate dynamic, goal-directed behavior rather than predefined logic flows. A traditional system executes the logic its developers specified. An agentic system formulates its own execution plan to achieve a goal, adapts when that plan encounters unexpected conditions, and learns from the outcomes it observes. Designing reliable behavior for systems with this degree of autonomy requires specific architectural components (reasoning engines, persistent memory, tool integration layers, orchestration frameworks, and governance controls) that conventional architecture patterns do not address.

For enterprises, the practical implication is that agentic AI architecture is not an extension of your existing AI or automation architecture. It is a new architectural discipline with its own design patterns, failure modes, and infrastructure requirements.

How does agentic AI architecture work?

A production agentic AI system operates as a closed-loop system. The agent receives an objective, retrieves relevant context from its memory layer, formulates a plan using its reasoning engine, executes the first step by calling appropriate tools through the tool integration layer, observes the result, updates its internal state, and continues the loop until the objective is achieved or an escalation condition is met.
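The loop described above can be reduced to a short Python sketch. Everything named here is hypothetical: run_agent, AgentState, the scripted planner (which stands in for an LLM-backed reasoning engine), and the two lambda tools. Memory is simplified to the list of observations carried in the agent's state, and the escalation conditions are limited to an unknown tool or an exhausted step budget.

```python
from dataclasses import dataclass, field
from typing import Callable, Dict, List, Optional, Tuple

Step = Tuple[str, str]  # (tool name, argument)


@dataclass
class AgentState:
    objective: str
    observations: List[str] = field(default_factory=list)  # working memory
    done: bool = False
    escalated: bool = False


def run_agent(objective: str,
              plan_next_step: Callable[[AgentState], Optional[Step]],
              tools: Dict[str, Callable[[str], str]],
              max_steps: int = 10) -> AgentState:
    state = AgentState(objective)
    for _ in range(max_steps):
        step = plan_next_step(state)       # reason over the current state
        if step is None:                   # planner reports the objective is met
            state.done = True
            return state
        tool_name, argument = step
        if tool_name not in tools:         # escalation: plan needs an unknown tool
            state.escalated = True
            return state
        result = tools[tool_name](argument)  # act through the tool layer
        # Observe the result and update state before planning the next step.
        state.observations.append(f"{tool_name}({argument}) -> {result}")
    state.escalated = True                 # escalation: step budget exhausted
    return state


def scripted_planner(state: AgentState) -> Optional[Step]:
    # Stand-in for a reasoning engine: a fixed two-step plan.
    script = [("lookup_order", "A-100"), ("check_refund_policy", "A-100")]
    index = len(state.observations)
    return script[index] if index < len(script) else None


tools = {
    "lookup_order": lambda order_id: "delivered",
    "check_refund_policy": lambda order_id: "eligible",
}
final = run_agent("Decide refund for order A-100", scripted_planner, tools)
```

The step budget is the detail worth noticing: without an upper bound on iterations, a planner that never declares the objective complete would loop, and call paid tools, indefinitely.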

In multi-agent systems, an orchestration layer manages this loop across multiple specialized agents. A supervisor agent decomposes the high-level objective into subtasks, delegates each subtask to the appropriate specialist agent, monitors progress, handles inter-agent dependencies, and synthesizes the final output. The governance layer operates in parallel throughout, monitoring every agent action, logging decisions, and intercepting actions that exceed defined operational boundaries.

The system’s reliability depends on the quality of every component in this loop. A weak reasoning engine makes poor plans. Inadequate memory causes context degradation in long workflows. Poorly designed tool integration creates brittle connections to enterprise systems. Inadequate governance creates regulatory and operational risk. The architecture must address all five components, not just the reasoning layer that receives the most vendor attention.

What are the core components of agentic AI architecture?

Every production-grade agentic AI architecture requires five functional components: a reasoning engine (the LLM and reasoning strategy that formulates plans and makes decisions), a memory system (short-term context management and long-term persistent storage using vector databases), a tool integration layer (the connections to external systems, APIs, and data sources that agents act through), an orchestration layer (the coordination mechanism for single-agent planning loops and multi-agent task delegation), and a governance and safety layer (permission management, audit logging, risk controls, and human escalation protocols).

These components are interdependent. The quality of agent reasoning is limited by the quality of the context the memory system provides. The value of tool access is limited by the governance constraints that define what agents can do with that access. The scalability of multi-agent systems is determined by the orchestration layer’s ability to coordinate agents without introducing coordination failures. Architects who treat these components as independent features to be added sequentially consistently build systems that fail in production for the same reason: the weakest component limits the entire system’s reliability.

What is the difference between agentic AI and traditional AI systems?

Traditional AI systems are reactive. They process inputs and generate outputs in a single exchange, with humans orchestrating every step of any multi-step workflow. They have no persistent state between interactions, no ability to plan sequences of actions, and no mechanism to use tools or take actions in external systems without explicit invocation. Their intelligence is entirely contained in the model’s response to a single prompt.

Agentic AI systems are goal-directed. They receive an objective and autonomously determine the sequence of steps required to achieve it. They maintain state across those steps, remembering what they have done, what they have observed, and what remains. They use tools to take actions with real-world consequences: querying databases, calling APIs, executing code, sending communications. They adapt their plan when outcomes diverge from expectations. In multi-agent systems, they coordinate with other specialized agents to tackle tasks that exceed any individual agent’s scope.

The performance gap between these approaches widens as task complexity increases. For a task requiring one step and one data source, a traditional LLM and an agentic system produce comparable results. For a task requiring 20 steps, four data sources, parallel execution of independent subtasks, and error recovery from mid-process failures, only the agentic architecture can deliver consistent, reliable outcomes at production scale.

How much does it cost to implement agentic AI architecture?

Implementation cost varies substantially based on four primary factors: the complexity of the architecture (single-agent versus multi-agent versus enterprise platform), the state of the existing data and integration infrastructure, the industry’s regulatory requirements, and the team composition (internal build, vendor partnership, or hybrid).

As a planning reference, single-agent systems that automate a well-defined, bounded workflow typically require $25,000–$75,000 and 4–8 weeks. Multi-agent systems coordinating 3–7 specialized agents across a business domain range from $75,000–$250,000 over 8–16 weeks. Enterprise-scale agentic platforms serving an entire business function range from $250,000–$1,000,000+, with implementations running 16–36 weeks.

The cost category most consistently underestimated in enterprise planning is ongoing AI infrastructure. LLM inference costs for production agent workloads at scale can reach $15,000–$40,000 per month, depending on model selection and task volume. Organizations that model total cost of ownership accurately, including recurring infrastructure costs, are the ones that build business cases their CFOs approve and their operations teams can sustain. Underbidding on infrastructure is a primary driver of post-launch project reviews that result in system decommissioning.
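A quick worked calculation shows why recurring inference cost changes the business case. The sketch below combines the multi-agent build range quoted above with the at-scale inference range. Pairing those two ranges is an assumption made for illustration, since actual inference spend depends on model selection and task volume, and the first_year_tco helper is hypothetical.

```python
from typing import Tuple

Range = Tuple[int, int]  # (low estimate, high estimate) in US dollars


def first_year_tco(build_cost: Range, monthly_inference: Range,
                   months: int = 12) -> Range:
    """Build cost plus recurring inference cost over the given number of months."""
    low = build_cost[0] + monthly_inference[0] * months
    high = build_cost[1] + monthly_inference[1] * months
    return (low, high)


multi_agent_build = (75_000, 250_000)   # build range quoted in this guide
inference_at_scale = (15_000, 40_000)   # monthly range quoted in this guide

low, high = first_year_tco(multi_agent_build, inference_at_scale)
print(f"First-year TCO: ${low:,} to ${high:,}")
# prints "First-year TCO: $255,000 to $730,000"
```

Under these assumptions, twelve months of inference ($180,000 to $480,000) exceeds the build cost at both ends of the range, which is why a budget that covers only the build understates first-year spend by more than half.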

What are the biggest challenges in implementing agentic AI architecture?

The five challenges that most frequently derail enterprise agentic AI implementations are: legacy system integration (most enterprise systems were not built to support the real-time API access, modern data contracts, and secure identity management that agentic systems require); agent reliability and hallucination management (agents that make confident errors in production cause real business harm, so architectural controls are required, not just better models); memory and context degradation in long-running workflows (agents that lose track of earlier decisions produce inconsistent or contradictory outputs at scale); multi-agent coordination failures (duplicate work, contradictory outputs, and race conditions when agent communication protocols are poorly designed); and governance and compliance gaps (particularly in regulated industries where the EU AI Act, HIPAA, and financial regulatory frameworks create specific obligations for AI systems making consequential decisions).

The consistent pattern in the 40% of projects that fail is that these challenges were identified during implementation rather than during architecture design. The organizations that successfully deploy agentic AI at scale treat these five areas as design requirements, not risk mitigation items. They audit legacy system integration before project approval. They build memory architecture before agent logic. They implement governance before production deployment. Solving these problems in the right order is as important as solving them at all.

Which industries benefit most from agentic AI architecture?

Every industry with high-volume, multi-step knowledge workflows benefits from agentic AI architecture, but the ROI concentration is currently highest in four sectors. Financial services gains the most from agentic AI in credit underwriting, compliance monitoring, and claims processing: processes that require judgment, access to multiple data sources, and consistent execution across thousands of cases per day. Healthcare benefits most from clinical documentation automation and revenue cycle management, where the combination of regulatory complexity and administrative burden creates the highest value opportunity for agentic coordination. Logistics and supply chain management gains from event-driven agentic architectures that handle exception management and routing optimization faster than any human coordination model allows. Enterprise IT benefits most from agentic incident response systems that reduce mean time to resolution by 60–80% versus traditional human-and-script-based approaches.

What these sectors have in common is not their industry. It is their workflow profile: high-volume, multi-step, time-sensitive, requiring access to multiple data sources, and currently executed by skilled professionals who would be better utilized on higher-judgment work. Any enterprise workflow that fits that profile is a candidate for agentic architecture, regardless of industry.

What is multi-agent orchestration in agentic AI?

Multi-agent orchestration is the coordination mechanism that enables multiple specialized AI agents to work together toward shared objectives without duplicating effort, producing conflicting outputs, or creating coordination failures that undermine the system’s reliability.

In a well-designed multi-agent system, a supervisor agent receives the high-level objective, decomposes it into subtasks appropriate for each specialist agent, assigns tasks with clear inputs and success criteria, monitors execution, manages dependencies between tasks whose outputs feed other tasks’ inputs, handles errors when individual agents fail, and synthesizes the final output from individual agent contributions. Worker agents (a research specialist, a data analyst, a communications agent, a compliance checker) execute their assigned tasks with access to the tools and data their role requires.

The orchestration architecture determines whether this coordination produces outcomes better than any individual agent could achieve (the goal) or outcomes worse than a single simpler agent (the common failure mode). LangGraph’s graph-based state management, CrewAI’s role-based task framework, and Microsoft AutoGen’s conversational multi-agent patterns each address orchestration differently, so framework selection must match the coordination complexity of the specific use case.
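The supervisor's dependency management can be sketched without any of these frameworks, using only the Python standard library. The example is deliberately simplified: run_supervisor, the task names, and the lambda workers are hypothetical, tasks run sequentially rather than in parallel, and error handling is omitted. It relies on graphlib.TopologicalSorter (Python 3.9 and later) to guarantee that each worker runs only after the tasks it depends on have produced output.

```python
from graphlib import TopologicalSorter
from typing import Callable, Dict, List

Worker = Callable[[Dict[str, str]], str]


def run_supervisor(dependencies: Dict[str, List[str]],
                   workers: Dict[str, Worker]) -> Dict[str, str]:
    """Runs each task after its upstream tasks and passes their outputs along."""
    results: Dict[str, str] = {}
    # static_order() yields every task after all of its predecessors.
    for task in TopologicalSorter(dependencies).static_order():
        upstream = {dep: results[dep] for dep in dependencies.get(task, [])}
        results[task] = workers[task](upstream)
    return results


# Each key is a task; each value lists the tasks whose output it needs.
dependencies = {
    "research": [],
    "analysis": ["research"],
    "compliance_check": ["analysis"],
    "summary": ["analysis", "compliance_check"],
}

# Hypothetical worker agents, reduced to plain functions for illustration.
workers: Dict[str, Worker] = {
    "research": lambda up: "3 sources",
    "analysis": lambda up: f"analysis of {up['research']}",
    "compliance_check": lambda up: "approved",
    "summary": lambda up: f"{up['analysis']} ({up['compliance_check']})",
}

results = run_supervisor(dependencies, workers)
```

Declaring dependencies as data rather than burying them in agent logic also means a circular dependency is caught up front: TopologicalSorter raises a CycleError instead of letting two agents wait on each other.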

How long does it take to implement agentic AI architecture in an enterprise?

Implementation timeline depends on the architecture’s scope and the organization’s readiness. A single-agent system implementing one well-defined workflow can reach production in 4–8 weeks when the data infrastructure and integration environment are already mature. A multi-agent system coordinating multiple specialized agents across a business domain typically requires 8–16 weeks for a team with agentic AI architecture experience, with the variation driven primarily by integration complexity.

Enterprise-scale agentic platforms (systems serving an entire business function with sophisticated orchestration, governance, and observability) realistically require 16–36 weeks. This timeline assumes no significant legacy system modernization is required. When legacy integration work is part of the project scope, add 8–16 weeks for that component alone.

The single most reliable predictor of timeline is the quality of architecture and use case design at the project’s start. Organizations that invest 2–4 weeks in architecture design before writing any code consistently deliver in less total time than those that start building immediately and discover architectural issues during development. The governance layer, often deprioritized as non-functional work, is the component most likely to add unexpected time when it is addressed late rather than designed in from the start.

How do enterprises govern and secure agentic AI systems?

Enterprise governance for agentic AI systems operates at three levels: permission governance (defining what each agent is allowed to access, what tools it can use, and what actions require human approval), operational governance (audit logging of all agent decisions and actions, monitoring of agent behavior against defined performance and risk metrics, anomaly detection when agents behave outside expected parameters), and regulatory governance (ensuring agent behavior complies with applicable regulations such as the EU AI Act, HIPAA, PCI DSS, and SEC guidelines, including the documentation, explainability, and human oversight requirements these frameworks specify).

The architectural implementation of governance centers on the guardian agent model: a dedicated monitoring agent that observes the actions of primary agents, applies policy rules to those actions, flags potential violations, and escalates to human oversight when primary agents reach decisions outside their operational boundaries. This pattern decouples governance from business logic: governance rules can be updated without modifying the underlying agents, and governance violations are surfaced through a consistent channel regardless of which agent generated them.
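A stripped-down version of the guardian pattern might look like the following Python sketch. The GuardianAgent, PolicyRule, and AgentAction names, the permission table, and the $500 refund limit are all assumptions for illustration. What the sketch is meant to show is the decoupling: rules live in the guardian and can be added at runtime, while the primary agents are never modified.

```python
from dataclasses import dataclass
from typing import Callable, Dict, List, Set, Tuple


@dataclass
class AgentAction:
    agent_id: str
    tool: str
    params: Dict[str, object]


@dataclass
class PolicyRule:
    name: str
    violates: Callable[[AgentAction], bool]


class GuardianAgent:
    def __init__(self, rules: List[PolicyRule]) -> None:
        self.rules = list(rules)
        # Single channel for violations, whichever agent produced them.
        self.escalations: List[Tuple[AgentAction, List[str]]] = []

    def add_rule(self, rule: PolicyRule) -> None:
        # Policies change here; primary agents stay untouched.
        self.rules.append(rule)

    def review(self, action: AgentAction) -> bool:
        """Returns True if the action may proceed, False if it was escalated."""
        violated = [rule.name for rule in self.rules if rule.violates(action)]
        if violated:
            self.escalations.append((action, violated))
            return False
        return True


# Hypothetical permission table and limit, for illustration only.
permissions: Dict[str, Set[str]] = {"billing_agent": {"issue_refund", "lookup_invoice"}}

guardian = GuardianAgent([
    PolicyRule("tool_not_permitted",
               lambda a: a.tool not in permissions.get(a.agent_id, set())),
    PolicyRule("refund_over_limit",
               lambda a: a.tool == "issue_refund" and a.params.get("amount", 0) > 500),
])

small_ok = guardian.review(AgentAction("billing_agent", "issue_refund", {"amount": 120}))
large_ok = guardian.review(AgentAction("billing_agent", "issue_refund", {"amount": 5000}))

# A new policy takes effect immediately, with no change to the billing agent.
guardian.add_rule(PolicyRule("refund_freeze", lambda a: a.tool == "issue_refund"))
frozen_ok = guardian.review(AgentAction("billing_agent", "issue_refund", {"amount": 120}))
```

The small refund passes, the large one is escalated under the refund limit, and after the freeze rule is added the same small refund is escalated as well.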

The key governance principle that distinguishes production-reliable agentic systems from ones that generate liability: every consequential agent action must be traceable, with sufficient context logged to reconstruct the agent’s reasoning at the time of the decision. Regulators and auditors will ask for this. Building audit infrastructure after a regulatory inquiry is not a viable compliance strategy.

For enterprises building agentic AI architecture and looking for guidance on governance design, security architecture, or regulatory compliance integration.