What this guide covers: A research-backed, six-pillar framework for evaluating any tech firm's genuine AI readiness, including a practical scorecard, 20 due-diligence questions, and the critical red flags that separate AI leaders from AI performers. Sourced from BCG, Deloitte, McKinsey, Riviera Partners, OvalEdge, RadixWeb, Bright Horizons, and 20+ additional 2025–2026 research sources.

Every tech firm in 2026 claims to be AI-powered. The question is: which ones actually are?

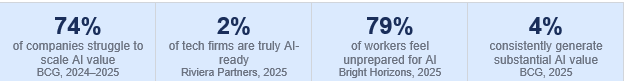

According to BCG's research surveying 1,000 senior executives across 59 countries, 74% of companies are still struggling to achieve and scale value from AI, despite years of investment, pilot programs, and hiring. Only 26% have developed the capabilities to move beyond proof-of-concept. And at the very top, just 4% are consistently generating substantial value across functions.

Riviera Partners' Future of Tech Leadership report drives the point home: only 2% of tech companies are fully prepared for the leadership and structural demands of real AI implementation.

These are not small organizations making rookie mistakes. These are global enterprises with billion-dollar budgets and world-class engineering teams. For CTOs, CIOs, founders, investors, and enterprise decision-makers evaluating technology partners or vendors in 2026, learning to separate genuine AI readiness from polished AI theater has become one of the most valuable business skills you can develop.

Why 'AI-Ready' Has Become the Most Overused Label in Tech

Walk through any enterprise technology conference in 2026 and count how many vendors use the words 'AI-ready,' 'AI-native,' or 'AI-first.' You will lose count before lunch.

The inflation of the AI-readiness label is not just a marketing problem; it is a business risk. Organizations that partner with or invest in firms performing AI theater rather than delivering AI value face implementation failures, budget waste, and competitive setbacks that take years to recover from.

Several dynamics are driving this problem in 2026:

- Gartner predicts 60% of AI projects will be abandoned if the data behind them is not truly AI-ready

- Only 17% of workers use AI frequently at work today, revealing the massive gap between tool availability and workforce integration (Bright Horizons Education Index 2025)

- 79% of employees feel unprepared to use AI at work, and 65% report their employer has provided no AI training at all (Bright Horizons Education Index 2025)

- BCG AI leaders generate 1.7x higher revenue growth and 3.6x higher three-year total shareholder return than less mature peers, creating powerful financial incentives to exaggerate readiness claims

The real question organizations must answer is not 'are you doing AI?' but 'are you structurally prepared to let AI operate inside your business?' Most cannot answer yes. (Sweep.io Enterprise AI Readiness Brief, 2026)

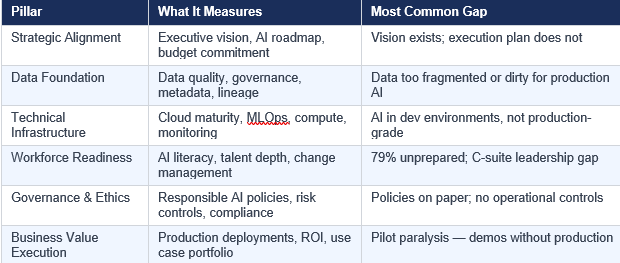

The Six Pillars of Genuine AI Readiness

A proper AI readiness assessment in 2026 does not evaluate whether a firm uses AI tools. It evaluates whether the firm has built the foundational conditions that allow AI to move from experimentation into production, and from production into measurable business value. Those conditions fall into six pillars: strategic alignment, data foundation, technical infrastructure, workforce readiness, governance and responsible AI, and business value execution.

Pillar 1: Strategic Alignment

According to Riviera Partners' Future of Tech Leadership research, 62% of tech leaders say AI is 'very important' to their enterprise strategy, but strategic importance does not equal executional readiness. The gap between the two is where most organizations fail.

Genuine strategic alignment in 2026 is not measured by a CEO's enthusiasm or the presence of an AI slide in the investor deck. It is measured by organizational structure, resource commitment, and accountability architecture.

Green Lights — Signals of Genuine Strategic Alignment

- A named Chief AI Officer or VP of AI reporting directly to the CEO. Riviera Partners' research shows high-readiness organizations are more likely to have direct CEO reporting lines; when that line is absent, the role tends to become symbolic

- An AI roadmap reviewed and approved at the board level with quantified OKRs linked to business outcomes

- A dedicated AI/ML budget line item representing a multi-year commitment, not a pilot allocation

- An AI Steering Committee with representation from product, engineering, data, operations, compliance, and finance

- Board members who receive regular AI governance updates and ask accountability questions about outcomes, not just initiative status

Red Flags — Signals of Strategic Theater

- AI strategy described entirely in marketing language with no operational metrics or timelines

- AI ownership resting exclusively in IT or a skunkworks innovation lab with no cross-functional business involvement

- 'AI strategy' that amounts to a list of tools the company has purchased or plans to purchase

- No separation between AI innovation budget (for experiments) and AI transformation budget (for production systems)

- The executive team cannot name two or three specific AI deployments delivering measurable ROI today

Pillar 2 — Data Foundation: The Most Brutally Honest Indicator

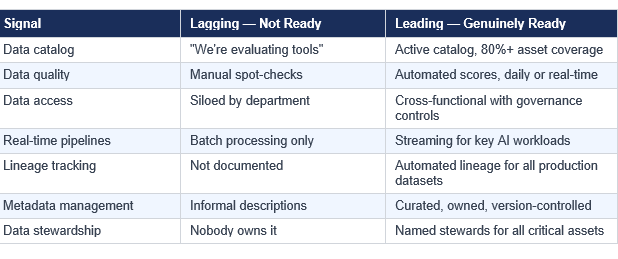

Every credible source examining AI readiness in 2026 reaches the same conclusion: data quality is the number one predictor of AI success or failure. OvalEdge's comprehensive assessment research documents that 67% of organizations cite data quality issues as their top AI readiness challenge. The Drexel LeBow/Precisely State of Data Integrity and AI Readiness 2026 report found that nearly 70% of data leaders say their data is not clean or trustworthy enough for AI.

This is the pillar where the most revealing conversations happen, because data maturity cannot be faked in a short evaluation process. You can claim to have a data strategy. You cannot fake having clean, governed, production-ready data.

What Genuine Data Readiness Looks Like

- An active data catalog with documented ownership for 80% or more of critical data assets (OvalEdge, Atlan, Collibra, or equivalent)

- Automated data quality scoring with daily or real-time updates, not manual spot-checks that rely on individual heroics

- Data lineage tracking across all datasets used in production AI systems, answering 'where did this training data come from?'

- A business glossary ensuring consistent data definitions across teams. OvalEdge research identifies this as giving everyone 'the same data language'

- Real-time data pipelines feeding time-sensitive AI applications, not batch updates that introduce hours of latency

The Practical Test That Reveals Everything

Ask this specific question: 'Can you show us one data product (a specific dataset used to train or operate a production AI system) with documented ownership, quality score, update frequency, and end-to-end lineage?'

A genuinely AI-ready firm answers immediately with specifics and documentation. A firm at Stage 1 or 2 will describe their data governance roadmap, reference the tool they are evaluating, or tell you about a dataset that exists in a pilot environment.
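The practical test can be turned into a simple evidence check. The sketch below is illustrative only: the `DataProduct` record, its field names, and the sample values are hypothetical and do not come from any catalog tool's schema. It shows one way to list which of the four evidence items a firm failed to produce.

```python
from dataclasses import dataclass, field
from typing import List, Optional


# Hypothetical record layout for illustration; not a schema from
# OvalEdge, Atlan, Collibra, or any other catalog product.
@dataclass
class DataProduct:
    name: str
    owner: Optional[str] = None             # accountable person or team
    quality_score: Optional[float] = None   # automated score, assumed 0.0 to 1.0
    update_frequency: Optional[str] = None  # e.g. "hourly", "daily"
    lineage: List[str] = field(default_factory=list)  # upstream sources, in order


def missing_evidence(product: DataProduct) -> List[str]:
    """Return the evidence items from the practical test that are absent."""
    gaps = []
    if not product.owner:
        gaps.append("documented ownership")
    if product.quality_score is None:
        gaps.append("quality score")
    if not product.update_frequency:
        gaps.append("update frequency")
    if not product.lineage:
        gaps.append("end-to-end lineage")
    return gaps


# Made-up examples: one fully documented data product, one "roadmap only".
documented = DataProduct(
    name="churn_training_set",
    owner="customer-analytics",
    quality_score=0.97,
    update_frequency="daily",
    lineage=["crm_export", "billing_events", "feature_store.churn_v3"],
)
roadmap_only = DataProduct(name="pilot_dataset", owner="data-team")

print(missing_evidence(documented))
print(missing_evidence(roadmap_only))
```

An empty list corresponds to the "answers immediately with specifics" case. Anything else tells you which follow-up question to ask.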

Pillar 3 — Technical Infrastructure: Can the System Actually Scale?

Research across enterprise organizations found that 64% of enterprises lack the architecture required for reliable AI operations. Organizations have the intent and even some of the talent, but their underlying technical architecture was never designed to support enterprise AI workloads.

Cloud readiness is now the baseline. With 85% of enterprises using multi-cloud strategies, the question is not whether a firm uses cloud, but whether they have cloud infrastructure purpose-built for AI. AWS SageMaker, Azure Machine Learning, and Google Vertex AI represent the 2026 standard for production AI infrastructure.

The MLOps Differentiator — The Single Clearest Technical Signal

MLOps (Machine Learning Operations) applies DevOps principles to machine learning systems. Organizations with mature MLOps pipelines can build, test, deploy, monitor, and retrain AI models in automated, reproducible, auditable workflows. Organizations without MLOps are running a manual craft operation in which every deployment is a unique, labor-intensive event.

A mature MLOps practice in 2026 includes:

- Version control for models, training datasets, features, and configurations; reproducibility is mandatory

- Automated CI/CD pipelines that test and deploy model updates without manual intervention at each step

- Staging environments that faithfully replicate production conditions before any model goes live

- A/B testing infrastructure to evaluate new model versions against the currently deployed baseline

- Real-time performance monitoring with automated alerts for accuracy drift, data drift, and latency degradation

- Automated retraining pipelines triggered by detected performance degradation or incoming distribution shift
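To make the last two items concrete, here is a minimal sketch of a data drift check that could gate a retraining pipeline. It uses the Population Stability Index (PSI), which is one common drift measure and not one the sources above prescribe. The 0.2 threshold is a widely used rule of thumb and should be treated as an assumption, as should the function names.

```python
import math


def population_stability_index(baseline, current, bins=10):
    """PSI between two numeric samples, binned on the baseline's range."""
    lo, hi = min(baseline), max(baseline)
    width = (hi - lo) / bins or 1.0  # fall back to 1.0 if the baseline is constant

    def proportions(values):
        counts = [0] * bins
        for v in values:
            idx = int((v - lo) / width)
            counts[min(max(idx, 0), bins - 1)] += 1  # clamp out-of-range values
        # A small floor avoids log(0) when a bin is empty.
        return [max(c / len(values), 1e-6) for c in counts]

    p, q = proportions(baseline), proportions(current)
    return sum((qi - pi) * math.log(qi / pi) for pi, qi in zip(p, q))


def needs_retraining(baseline, current, threshold=0.2):
    """Flag retraining when drift exceeds the (assumed) PSI threshold."""
    return population_stability_index(baseline, current) > threshold


# Synthetic feature values: training-time sample vs. two production samples.
training_sample = [i / 100 for i in range(100)]
stable_sample = list(training_sample)
shifted_sample = [0.5 + i / 200 for i in range(100)]

print(needs_retraining(training_sample, stable_sample))
print(needs_retraining(training_sample, shifted_sample))
```

In a real pipeline the boolean would trigger an alert or a retraining job rather than a print, and accuracy and latency would be monitored alongside input drift.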

Infrastructure Red Flags

- Primary AI workloads running on legacy on-premises infrastructure with no cloud migration plan

- No dedicated GPU or TPU resources allocated to AI training or inference

- Data scientists deploying models manually, one at a time, directly to production

- No monitoring system for models in production; a firm may not know when its AI has stopped working

- AI infrastructure treated as a development sandbox rather than a production system with SLAs

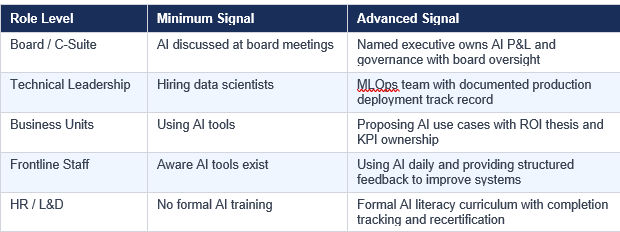

Pillar 4 — Workforce Readiness: The Human Crisis Nobody Wants to Talk About

The Bright Horizons 2025 Education Index survey of 2,017 US workers contains a number that should alarm every technology executive: 79% of employees feel unprepared to use AI at work. Even more troubling, 65% say their employer has provided no AI training at all, and 42% say their employer expects them to learn AI entirely on their own.

McKinsey's 2025 AI in the Workplace report identifies the root cause: C-suite leaders are more than twice as likely to say employee readiness is a barrier to adoption as they are to acknowledge their own leadership role. The real barrier is leadership, not the workforce.

'Readiness has little to do with which models or platforms a company uses. It has everything to do with whether leaders can connect technical capability to commercial outcomes.' (Riviera Partners, AI Hiring Blueprint 2026)

Layer 1: Technical AI Talent

- Data scientists who deploy models to production, not just notebooks. Evaluate their production model count, not their research portfolio

- ML engineers who build and maintain MLOps pipelines. The ratio that matters is ML engineers to data scientists: production-focused teams run at approximately 1:1 or 2:1

- Data engineers who architect the data infrastructure that feeds AI systems reliably at scale

- AI architects who design enterprise-scale systems with governance, monitoring, and failover by design

Layer 2: AI-Literate Business Users

Deloitte's State of AI in the Enterprise found that 53% of leading organizations are actively educating their broader workforce on AI fundamentals. AI implementation consistently fails when business users cannot interpret AI outputs, identify errors, or propose high-value use cases for their domain.

Layer 3: Change Management Infrastructure

Cisco's AI Readiness Index shows that 91% of Pacesetters (the top tier of AI-ready organizations) have comprehensive change management plans, compared to just 35% of all companies surveyed. This 56-percentage-point gap is one of the most dramatic differentiators in the entire research base.

Pillar 5 — Governance and Responsible AI: The 2026 Compliance Imperative

Governance has crossed the threshold from nice-to-have to board-level obligation. The EU AI Act is in its enforcement phase for high-risk systems. OvalEdge's research on AI data governance documents that 51% of Chief Data Officers ranked data governance as their top 2025 priority. The OWASP AI Maturity Assessment project provides a rigorous open framework for evaluating how deeply governance controls are embedded across an organization's AI lifecycle.

What Mature AI Governance Looks Like Operationally

- A Responsible AI policy that covers data use, model fairness, explainability, human oversight, and incident response, and that has actually been applied to real AI systems, not just published

- Model cards for every production AI system documenting purpose, training data sources, performance characteristics, known limitations, and applicable use restrictions

- A formal AI risk register tracking identified risks, likelihood, potential impact, mitigation owner, and remediation timeline

- Regular bias audits with documented findings, remediation actions taken, and verification that remediations worked

- An AI incident response playbook defining what happens when an AI system produces harmful, biased, or non-compliant outputs
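A risk register of the kind described above can be very small and still be operational. The sketch below is one possible shape, not a standard. The 1-to-5 scales, the likelihood-times-impact severity score, and the sample risks are all assumptions made for illustration.

```python
from dataclasses import dataclass
from datetime import date
from typing import List, Optional


@dataclass
class Risk:
    description: str
    likelihood: int                # assumed scale: 1 (rare) to 5 (almost certain)
    impact: int                    # assumed scale: 1 (minor) to 5 (severe)
    owner: Optional[str]           # mitigation owner
    remediate_by: Optional[date]   # remediation deadline

    @property
    def severity(self) -> int:
        # Likelihood x impact is a common convention, used here as an assumption.
        return self.likelihood * self.impact


def needs_attention(register: List[Risk], today: date) -> List[Risk]:
    """Risks with no owner, no deadline, or a missed deadline, worst first."""
    flagged = [
        r for r in register
        if r.owner is None or r.remediate_by is None or r.remediate_by < today
    ]
    return sorted(flagged, key=lambda r: r.severity, reverse=True)


# Hypothetical entries for illustration only.
register = [
    Risk("Training data contains unvetted third-party records", 3, 4,
         "data-governance", date(2026, 3, 31)),
    Risk("No fallback when the scoring model is unavailable", 2, 5, None, None),
    Risk("Bias audit overdue for a production model", 4, 5,
         "risk-office", date(2026, 1, 15)),
]

for risk in needs_attention(register, today=date(2026, 2, 1)):
    print(risk.severity, risk.description)
```

When reviewing a firm's register, the useful signal is whether entries like the unowned or overdue ones are surfaced and acted on, not whether the register exists.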

Agentic AI Governance — The 2026 Frontier

Apptunix's enterprise AI trends analysis documents Gartner's forecast that 40% of enterprise apps will include task-specific AI agents by 2026. Only 24% of organizations have the infrastructure to control agent actions with proper guardrails and live monitoring, creating a significant governance gap precisely when agentic AI adoption is accelerating fastest.

For firms deploying agentic systems, evaluate:

- Defined action boundaries specifying exactly what each agent can and cannot do autonomously

- Human approval checkpoints for consequential decisions, not just checkpoints triggered by errors

- Real-time behavioral monitoring of agent activity with automatic suspension triggers

- A complete audit trail of every agent action for compliance and debugging purposes

- Tested rollback procedures for when agent outputs are discovered to be problematic
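Three of the controls above (action boundaries, human approval checkpoints, and an audit trail) can be sketched in a few lines. This is a deliberately simplified illustration under stated assumptions: the `AgentPolicy` class, the action names, and the decision labels are hypothetical and do not reflect any agent framework's API.

```python
from dataclasses import dataclass, field
from typing import List, Optional, Set


@dataclass
class AgentPolicy:
    allowed_actions: Set[str]   # action boundary: everything else is denied
    needs_approval: Set[str]    # consequential actions requiring a human
    audit_log: List[dict] = field(default_factory=list)

    def authorize(self, agent_id: str, action: str,
                  approved_by: Optional[str] = None) -> str:
        """Decide on one requested action and record the decision."""
        if action not in self.allowed_actions:
            decision = "denied"
        elif action in self.needs_approval and approved_by is None:
            decision = "pending_approval"
        else:
            decision = "allowed"
        # Every request is logged, including denied ones.
        self.audit_log.append({
            "agent": agent_id,
            "action": action,
            "approved_by": approved_by,
            "decision": decision,
        })
        return decision


# Hypothetical policy for a support agent.
policy = AgentPolicy(
    allowed_actions={"read_ticket", "draft_reply", "issue_refund"},
    needs_approval={"issue_refund"},
)

print(policy.authorize("support-agent-1", "draft_reply"))
print(policy.authorize("support-agent-1", "issue_refund"))
print(policy.authorize("support-agent-1", "issue_refund", approved_by="j.doe"))
print(policy.authorize("support-agent-1", "delete_account"))
print(len(policy.audit_log))
```

A production system would add behavioral monitoring, suspension triggers, and rollback, but even this minimal shape gives an evaluator something concrete to ask for: the allow-list, the approval list, and the log.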

Governance Red Flags

- A published Responsible AI policy with no evidence it has been applied to any actual AI project

- AI systems in production with no model cards or documented risk assessments

- No formal process for employees or users to report AI errors or ethical concerns

- Compliance and legal teams not involved in AI project approval processes

Pillar 6 — Business Value Execution: The Final and Decisive Test

All five preceding pillars exist for one purpose: enabling AI that reliably delivers measurable business value at enterprise scale. BCG's 2025 widening AI value gap research found that truly AI-ready firms generate 1.7x higher revenue growth, 3.6x higher three-year total shareholder return, and 2.7x higher return on invested capital compared to less mature peers. These are not marginal differences; they represent compounding competitive separation that widens every year.

The Production AI Diagnostic — The Single Most Revealing Test

Ask for a list of AI systems currently running in production. Not planned. Not in development. Not in staging. Not in a pilot with three users. Production means live systems serving real users, integrated with core business processes, with defined SLAs and performance metrics.

A Stage 3 firm will have 3 to 5 such systems. A Stage 4 firm will have 10 or more. A firm performing AI theater will have an impressive list of pilots and a defensive explanation for why none are in production yet.

BCG's AI leadership framework identifies the 70-20-10 resource allocation model: 70% of resources to people and processes, 20% to technology, 10% to algorithms. Organizations that invert this ratio, spending most on tools while underinvesting in people and processes, build foundations that will not support production AI at scale.
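As a quick arithmetic aid, the sketch below converts a firm's reported AI spend into percentage shares for comparison against 70-20-10. The function names, the sample figures, and the definition of "inverted" (tools and algorithms together outweighing people and processes) are assumptions for illustration, not part of BCG's framework.

```python
def allocation_shares(people_and_process, technology, algorithms):
    """Return each category's share of total AI spend, in percent."""
    total = people_and_process + technology + algorithms
    if total <= 0:
        raise ValueError("total spend must be positive")
    return {
        "people_and_process": round(100 * people_and_process / total, 1),
        "technology": round(100 * technology / total, 1),
        "algorithms": round(100 * algorithms / total, 1),
    }


def is_inverted(shares):
    """Assumed definition: tools and algorithms outweigh people and process."""
    return shares["technology"] + shares["algorithms"] > shares["people_and_process"]


# Made-up budgets for two firms.
balanced = allocation_shares(7_000_000, 2_000_000, 1_000_000)
tool_heavy = allocation_shares(1_500_000, 6_000_000, 2_500_000)

print(balanced, is_inverted(balanced))
print(tool_heavy, is_inverted(tool_heavy))
```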

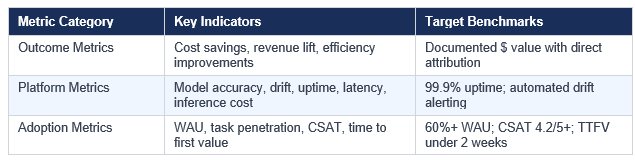

The ROI Measurement Framework

Genuinely AI-ready firms track three distinct categories of AI performance:

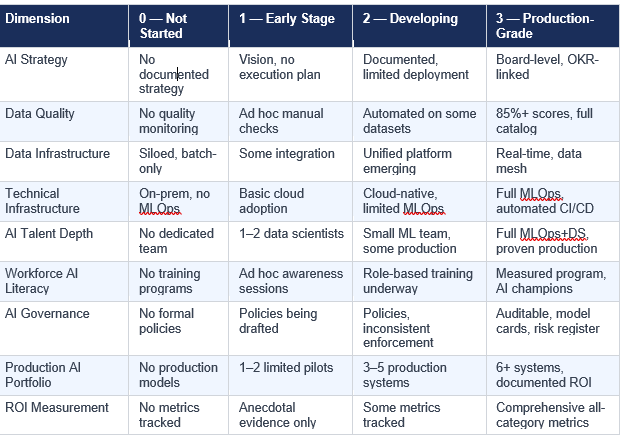

The AI Readiness Scorecard: Score Any Tech Firm in 30 Minutes

Use this scoring framework when evaluating any tech firm's AI readiness. Score each dimension from 0 to 3 based on verifiable evidence, not stated intentions.

Interpreting Your Score

- 0 to 8 points: Stage 1–2 maturity. AI claims are aspirational. High implementation risk.

- 9 to 16 points: Stage 3 maturity. Operational but limited. Specific use case success is possible; enterprise-scale deployment is not yet viable.

- 17 to 22 points: Stage 4 maturity. Genuine, verifiable capability. Validate production AI claims and governance controls before commitment.

- 23 to 27 points: Stage 5 maturity. Elite readiness. Rare in 2026. Organizations at this level typically have public case studies and auditable evidence.
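The interpretation bands above translate directly into a small lookup. The sketch below assumes nine scored dimensions, which is what a 27-point maximum at 0 to 3 points each implies. The function name and the shortened band descriptions are illustrative.

```python
# Bands copied from the interpretation list above: (low, high, stage, summary).
STAGE_BANDS = [
    (0, 8, "Stage 1-2", "AI claims are aspirational; high implementation risk"),
    (9, 16, "Stage 3", "Operational but limited"),
    (17, 22, "Stage 4", "Genuine, verifiable capability"),
    (23, 27, "Stage 5", "Elite readiness"),
]


def interpret_scorecard(scores):
    """Map nine 0-3 dimension scores to (total, stage, summary)."""
    if len(scores) != 9:
        raise ValueError("expected nine dimension scores (assumed from the 27-point maximum)")
    if any(not 0 <= s <= 3 for s in scores):
        raise ValueError("each dimension is scored from 0 to 3")
    total = sum(scores)
    for low, high, stage, summary in STAGE_BANDS:
        if low <= total <= high:
            return total, stage, summary


# Example: a firm strong on strategy and data, weaker elsewhere.
print(interpret_scorecard([3, 3, 2, 2, 2, 2, 1, 1, 1]))
```

Keeping the per-dimension scores, rather than only the total, makes it easier to see which pillar is dragging a firm into a lower band.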

20 Questions That Separate AI Leaders from AI Performers

These questions are designed to generate answers that cannot be prepared or faked without genuine capability. The specificity and confidence of responses will tell you more than any marketing material or reference call.

On Data Foundation

- What is the automated data quality score for the primary dataset feeding your most important production AI system?

- What percentage of your data assets have documented ownership and are cataloged in your data governance platform?

- How does your team detect and resolve data drift (the gradual change in data distribution that degrades model performance)?

- What is your process for reviewing training data to identify and mitigate potential sources of bias before model training?

On Technical Infrastructure

- How many AI models are currently running in production? Can you name them and describe what they do?

- Walk us through your model deployment process from the moment a data scientist finishes training to production traffic serving.

- What is your average elapsed time from model training completion to production deployment?

- How does your team know when a production AI model needs to be retrained? What triggers that process?

On Workforce

- What is your ratio of ML engineers to data scientists, and how does that reflect your production focus?

- What does your AI literacy curriculum include for non-technical employees, and what are your completion rates?

- How do business units propose and prioritize new AI use cases in your organization?

On Governance

- Can you share a model card for any of your current production AI systems?

- How do you conduct bias audits on production models, and what remediation actions have you taken based on findings?

- What is your process for human review of AI decisions with significant consequences for individuals or the business?

- How are your AI systems structured to comply with the EU AI Act, and which risk tier does your most consequential AI application fall under?

On Business Value

- What is the total financial value (cost savings plus revenue impact) you can directly attribute to AI in the past 12 months?

- Which AI pilot in the last 18 months failed to reach production, and what did you learn from that failure?

- How do you currently measure AI adoption among the intended users of each deployed system?

- What are the specific business KPIs your most recently deployed AI system was built to improve, and what has changed since deployment?

- What is your plan for AI capability development over the next 24 months, and what investment has been committed to execute it?

The Six Most Expensive AI Readiness Mistakes

Mistake 1: Purchasing AI tools before establishing data foundations. The result is that every AI initiative immediately encounters data quality failures that no tool can solve. Symptom: impressive AI tool stack, zero production models.

Mistake 2: Pilot paralysis, the endless experiment loop. BCG found that the fundamental challenge for 74% of companies is moving beyond proofs of concept. These organizations have multiple active pilots consuming budget and talent without producing production systems.

Mistake 3: AI built on legacy data architecture. Bolting AI onto data systems not designed for intelligent workloads creates compounding failures. Symptom: AI demos work in controlled conditions; production performance is erratic and impossible to debug.

Mistake 4: Treating AI as an IT project without business ownership. McKinsey identifies leadership alignment as the top operational headwind to AI adoption. Symptom: technically impressive AI with minimal business user adoption.

Mistake 5: Confusing AI governance documents with AI governance operations. Governance without operational controls is not governance; it is liability documentation. Symptom: polished AI ethics framework with no model cards, no risk register, no evidence the policy has ever affected a deployment decision.

Mistake 6: Neglecting change management entirely. Cisco's Pacesetters are 2.6x as likely as the average organization surveyed to have a comprehensive change management plan (91% versus 35%). Symptom: AI tools deployed organization-wide, but weekly active usage below 30% of eligible users six months post-launch.

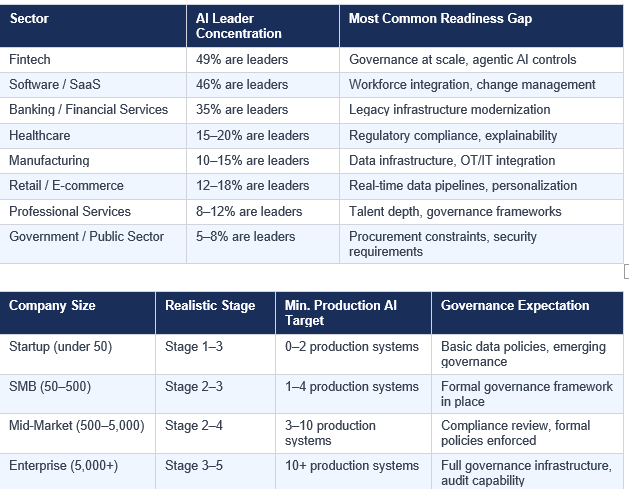

AI Readiness by Industry and Company Size

BCG's sector research identifies significant variation in AI readiness. Fintech leads with 49% of companies classified as AI leaders. Software follows at 46%, and banking at 35%.

Three 2026 Landscape Shifts That Redefine What Readiness Means

1. Agentic AI Has Moved from Research to Enterprise Deployment

Apptunix's enterprise AI trends research documents Gartner's forecast that 40% of enterprise apps will include task-specific AI agents by 2026. These agents (systems that autonomously plan and execute multi-step workflows) require a categorically different governance model than conventional AI. Only 24% of organizations have adequate controls for agentic systems. The firms not ready for agentic AI in 2026 will be operating at a structural disadvantage as agent-driven productivity becomes the competitive baseline.

2. Return on AI Investment Has Replaced 'AI Adoption' as the Board Metric

Boards and CFOs have run out of patience with investment narratives that promise future returns. BCG's research establishes that AI leaders focus on 'meaningful outcomes on cost and topline' rather than 'diffuse productivity gains.' Sweep.io's enterprise brief articulates the new standard: AI initiatives must be measured by revenue, cost reduction, or risk reduction, not by installation milestones.

3. The Data Activation Gap Is Becoming a Structural Competitive Moat

Atlan's research on AI-ready data identifies a growing divide between organizations that have data and organizations that can actually use their data for AI. The Modern Data Report 2026 found that finding and confirming data consumes more time than actually using it. Organizations that have solved this through unified access, embedded governance, and automated cataloging have built a competitive advantage that is genuinely difficult for laggards to close.

Conclusion: AI Readiness Assessment Is Now a Core Business Competency

In 2026, the most consequential business skill for technology executives, investors, board members, and enterprise decision-makers is not understanding AI algorithms. It is accurately evaluating AI readiness: distinguishing organizations that have built the foundational conditions for AI-driven value from those performing AI theater at scale.

The research from BCG, Deloitte, McKinsey, Riviera Partners, OvalEdge, Bright Horizons, Apptunix, RadixWeb, Sweep.io, and 20+ additional sources converges on a single conclusion: the firms generating extraordinary returns from AI are not the ones with the most sophisticated models or the largest budgets. They are the ones that invested in the right foundations: clean data, strong governance, production-grade infrastructure, AI-literate workforces, and executive accountability structures that connect AI capability to business outcomes.

The six-pillar evaluation framework in this guide gives you a systematic, evidence-based tool for making that assessment in any context: vendor evaluation, partnership due diligence, acquisition review, or internal capability audit. The scorecard converts observable signals into objective benchmarks. The 20 questions elicit answers that reveal genuine capability or expose its absence.

Use these tools consistently, and you will move beyond the AI-ready illusion to identify the firms that are genuinely prepared to deliver enterprise-level value in 2026 and beyond.

Frequently Asked Questions

How long does a thorough AI readiness assessment take for a mid-to-large tech firm?

A focused executive-level evaluation using this guide's framework takes 2 to 4 hours of structured conversation with the right stakeholders. A comprehensive technical and organizational assessment (including data audits, infrastructure reviews, talent analysis, and governance reviews) takes 4 to 8 weeks for a mid-size organization and 6 to 12 weeks for an enterprise.

What is the single most important indicator of genuine AI readiness?

Production AI models delivering documented, measurable business outcomes. Not pilots. Not demos. Not planned deployments. Live systems operating in production environments, serving real users, with defined performance standards and measured business impact. If a firm cannot point to at least two or three such systems, every other readiness claim requires proportionally higher scrutiny.

Can a small tech startup be genuinely AI-ready?

Yes, with appropriate calibration. A 30-person engineering team can be genuinely AI-ready if they have a clean, governed data foundation, at least one system in production, basic MLOps practices enabling reproducible deployments, and a clear AI strategy tied to specific business outcomes. Readiness is fundamentally about capability relative to organizational scale, not about matching enterprise governance infrastructure.

What is the difference between AI readiness and AI maturity?

AI readiness describes whether an organization has the foundational conditions to successfully adopt and deploy AI: essentially, can they get AI into production? AI maturity describes how sophisticated and business-aligned those AI capabilities are: essentially, how well is their AI working relative to best practice? Readiness is the prerequisite; maturity is the measure of how far they have progressed after establishing it.

What certifications or standards are meaningful indicators of AI readiness?

ISO/IEC 42001 (the AI management system standard) is the primary emerging international standard for AI governance. SOC 2 Type II certification indicates data security and process maturity that supports AI readiness. The OWASP AI Maturity Assessment framework provides a structured open-source approach to governance evaluation. However, certifications document that processes exist; they do not guarantee those processes are effective. The 20 questions in this guide will reveal effectiveness more reliably than any certificate.

What are realistic cost ranges for professional AI readiness assessment services?

Professional AI readiness assessment services range from approximately $30,000 to $75,000 for a focused midmarket evaluation, and from $100,000 to $300,000 for comprehensive enterprise-wide assessments including deep technical reviews, data audits, and governance analysis. The total cost of a failed AI partnership or implementation consistently exceeds assessment costs by orders of magnitude.

Is there a quick screening question that immediately reveals whether a firm is AI-ready or AI-aspirational?

Ask: 'Which specific AI system currently running in production is delivering the most business value, and what metrics demonstrate that?' A genuinely AI-ready firm answers immediately with specifics: the system, the use case, the metrics, the improvement since deployment. A firm performing AI theater will pause, redirect to a pilot, or describe a system that turns out to be in staging. The quality of that single response tells you more than an hour of polished presentations.