Eighty percent of AI projects launched by enterprises in 2025 failed to deliver their intended business value, according to a comprehensive analysis by RAND Corporation and MIT Sloan covering more than 2,400 initiatives. In the mobile app space, that number is not an abstraction. It translates to deleted apps, wasted sprint cycles, embarrassing product launches, and millions of dollars in AI infrastructure spending that produced nothing users actually wanted.

The irony is sharp. AI capability has never been more accessible. On-device models on modern smartphones can now run inference locally, without a server call, at speeds that would have required cloud infrastructure two years ago. Pre-trained foundation models have reduced the build time for sophisticated AI features from months to weeks. The tools exist to build AI-powered mobile experiences that genuinely change how users interact with software. Yet most teams using these tools produce features that users ignore, tolerate briefly, or abandon after one bad interaction.

The problem is not the technology. The failure pattern is consistent enough to be predictable: teams start with a model or a capability, then search for a problem to apply it to. That sequence produces AI features disconnected from real user needs, costing real money per inference call, and delivering nothing measurable. This blog identifies the specific failure modes behind that pattern, explains why they occur, and provides the decision framework that mobile app development teams can use to build AI features that actually work.

In This Guide

- The State of AI in Mobile Apps in 2026

- Why AI Features Fail in Mobile Apps

- The Five Root Causes of Mobile AI Failure

- What Successful AI Features in Mobile Apps Have in Common

- How to Build AI Features That Deliver Real Value

- AI Feature Types That Work and Which Ones Rarely Do

- Cost Structures for AI in Mobile Apps

- Governance, Privacy, and Compliance in Mobile AI

- How PrimeFirms Builds AI Features for Mobile Apps

- Frequently Asked Questions About AI in Mobile App Development

The State of AI in Mobile Apps in 2026

Mobile AI is a market undergoing rapid expansion alongside sustained underperformance. Both facts are true simultaneously, and understanding both is necessary before making decisions about AI features in your product.

On the expansion side: the global AI-enabled mobile app market reached $28.1 billion in 2025, expanding at a compound annual growth rate of 32.5%. Gartner predicts that 40% of enterprise applications will include task-specific AI agents by the end of 2026, up from less than 5% in 2025. Consumer behavior data from Sensor Tower shows ChatGPT became the fastest app to reach one billion global downloads in July 2025. Across all categories, AI capability has shifted from a competitive differentiator to a user expectation.

On the underperformance side: 70 to 85% of generative AI deployments in mobile applications fail to meet their expected ROI targets within the first year, according to research cited across Gartner, Forrester, and Nadcab Labs’ 2026 analysis of enterprise app deployments. Gartner separately warns that organizations will likely abandon 60% of AI projects unsupported by AI-ready data infrastructure. Meanwhile, the average enterprise that launched a failed AI initiative in 2025 lost between $4.2 million and $8.4 million per project, depending on how far into development the failure occurred.

The gap between these two realities has a structural cause. Mobile teams are under genuine pressure to ship AI features because users expect them and because product roadmaps now routinely include AI as a delivery milestone. That pressure produces a decision pattern where teams choose AI first and then look for the right place to apply it. Gartner explicitly flags this as the primary driver of project cancellation: the decision to use AI is made before the problem justifying it has been validated.

Gartner’s specific language matters here. Senior Director Analyst Anushree Verma stated publicly in June 2025: “Most agentic AI projects right now are early-stage experiments or proof of concepts that are mostly driven by hype and are often misapplied. This can blind organizations to the real cost and complexity of deploying AI agents at scale, stalling projects from moving into production.”

That misapplication is where mobile AI failure begins.

Why AI Features Fail in Mobile Apps

The question worth asking before examining specific failure modes is why, given everything the industry now knows about failed AI initiatives, the failure rate has not declined. The answer has two parts.

First, the incentive structure inside most product organizations rewards AI feature shipping, not AI feature performance. A product manager who ships an AI recommendation engine receives credit regardless of whether that engine improves conversion. The metric that matters for their review cycle is whether the feature launched. The metric that reveals whether the feature worked, Day 30 user retention or revenue per user, arrives too late to affect the decision that was already made.

Second, AI failure is often invisible at the feature level. A broken push notification system produces a clear error. An AI feature that produces marginally irrelevant recommendations does not break, it just quietly fails to matter. Users do not report it as a bug. They simply stop engaging with it. By the time engagement data reveals the problem, the team has moved to the next sprint.

These two dynamics combine to sustain a failure rate that technical progress alone cannot address. Better models do not fix the wrong problem. Faster inference does not save a feature that users do not trust. More sophisticated personalization algorithms cannot compensate for a feature that was designed without understanding what the user actually needed.

The mobile app market data reinforces this. According to Asomobile’s 2025 Mobile App Market Report, download growth across global app stores was essentially flat in 2025, growing only 0.8% year over year. Meanwhile, in-app purchase revenue grew 10.6%. Users are not downloading more apps. They are spending more time and money within the apps that earn their sustained attention. Day 30 retention has replaced downloads as the primary growth metric for apps that have moved past initial launch. The practical implication is that an AI feature that fails to improve retention is worse than no AI feature at all. It consumes infrastructure budget, creates support load for unexpected outputs, and contributes nothing to the metric that determines whether the app succeeds long term.

The Five Root Causes of Mobile AI Failure

These are not abstract failure modes. They appear in the same order, with the same consequences, across the range of mobile app development engagements where AI features underperformed.

Starting With the Technology, Not the Problem

The most common cause of AI failure in mobile apps is also the most straightforward: the team decided to add AI before they identified what problem needed solving. This shows up in product planning as AI features listed on roadmaps without corresponding user research, as sprint goals framed around capability deployment rather than outcome improvement, and as design reviews focused on where the AI interface appears rather than whether the underlying problem is real.

The organizational symptom of this pattern is what happens when you ask the team to articulate the specific user behavior the AI feature is intended to change. If the answer is vague (“improve personalization,” “enhance the experience,” “make the app smarter”), the feature was scoped from capability backward rather than from problem forward.

The cost of this mistake compounds. AI infrastructure costs are consumption-based. Every user query to an AI feature costs real money in model inference, token processing, and observability logging. According to research cited in Gartner’s 2025 analysis of AI project economics, the actual cost of an AI API call in a production mobile app is typically two to four times higher than the model’s published pricing once retry logic, retrieval-augmented generation embeddings, content moderation, and monitoring overhead are included. A feature with no clear user value problem to solve is spending that money to generate outputs nobody asked for.

Treating Internal Testing as Evidence of Production Reliability

Most AI features are tested extensively inside the development team before launch. They are tested against anticipated edge cases, against a curated set of inputs the team expects users to provide, and against quality benchmarks the team defines before understanding how real users will interact with the system.

Real users are creative in ways no internal test suite anticipates. They input queries the team never considered, approach the feature from contexts the test environment could not simulate, and chain together interactions that expose failure modes invisible in controlled testing. The confidence that comes from strong internal test results is one of the most reliable predictors of painful post-launch surprises.

The mathematical reality of compound error rates in AI workflows makes this worse. A single AI component operating at 99% accuracy produces approximately 1 failure per 100 interactions. In a multi-step AI workflow where each step depends on the output of the previous step, that error rate compounds. At 800 consecutive calls across a three-step workflow, each step running at 99% accuracy, the cumulative success rate drops to approximately 45%. This is not a failure of the model. It is a structural property of chained probabilistic systems that internal testing with small sample sizes does not reveal.

Underestimating Ongoing Cost Structures

AI features in mobile apps do not follow conventional software economics. Traditional app features carry an upfront development cost and modest ongoing maintenance. AI features carry upfront development cost plus recurring inference costs that scale directly with user engagement. The more users interact with the feature, the more it costs to operate.

The misunderstanding of this structure at the planning stage is a leading cause of post-launch project abandonment. Teams build the business case on development costs and launch. Then the monthly AI infrastructure bill arrives, scaled by the actual user engagement rate, and the economics that justified the feature do not hold. According to industry cost analyses for production mobile apps with active AI features, token and compute costs for AI features processing 10,000 user interactions per day typically range between $8,000 and $35,000 per month, depending on model selection, query complexity, and architecture design. That is a cost line that does not exist for non-AI features, and it grows with success rather than declining.

Ignoring the User Trust Layer

AI features that users do not trust do not get used. That statement sounds obvious, but the trust layer in AI-powered mobile apps requires specific design work that most teams neglect because it is not captured in feature specifications.

Trust in an AI feature is determined by three factors. Accuracy is the first: does the AI produce outputs that are correct often enough that users rely on it? Predictability is the second: does the AI behave in consistent, understandable ways so users know what to expect? Transparency is the third: can users understand why the AI produced a specific output well enough to evaluate whether to act on it?

The Thales 2026 Digital Trust Index, based on surveys of more than 15,000 consumers and IT decision makers across 13 countries, found that only 23% of consumers trust companies that use AI to handle their data. That baseline skepticism means every AI feature in a mobile app launches into a trust deficit with most of its potential users. Features that do not actively address transparency and predictability deepen that deficit with every suboptimal output.

The consequences are measurable. According to data from Zendesk’s CX Trends 2025 research, 63% of users will switch to a competitor after a single poor interaction with an AI feature. An AI feature that is technically functional but behaviorally unpredictable generates the same churn outcome as one that is broken.

Building AI Features That Duplicate Freely Available Capabilities

This failure mode costs the most money per user acquired and delivers the least value per dollar spent. It occurs when a mobile app builds an AI feature that replicates what the user can already accomplish by opening a general-purpose AI assistant.

If your app’s AI chat feature does what the user can do in thirty seconds with a ChatGPT query, you have not created value. You have added a development cost, an infrastructure cost, a maintenance cost, and a liability for the outputs it produces, all to replicate a capability the user already has for free. The user recognizes this immediately, uses the feature once out of curiosity, and never opens it again.

The mobile apps whose AI features drive genuine retention improvement share a specific characteristic: their AI operates on domain-specific knowledge, proprietary data, or real-time context that no general-purpose AI assistant can access. That is the category of AI feature worth building. Anything outside that category is adding cost without creating competitive advantage.

What Successful AI Features in Mobile Apps Have in Common

The mobile apps delivering measurable business outcomes from AI features are not building more sophisticated AI. They are building more appropriate AI. Three characteristics appear consistently in the features that work.

They solve a problem users already have. Not a problem the product team believes users should have. Not a problem users would have if they used the app differently. An existing, documented friction point in the user journey that the AI resolves in a way that no non-AI approach could achieve as effectively. The discipline of validating this before writing any AI code is what separates teams with strong retention outcomes from teams with impressive demo videos.

They use proprietary data or context that general models cannot access. An AI recommendation engine in a fitness app that factors in the user’s actual workout history, sleep data, injury notes, and progression goals recommends workouts that ChatGPT cannot. A financial management AI that understands the user’s specific spending patterns, recurring commitments, and savings goals provides guidance that no general-purpose assistant can match. The competitive moat in mobile AI is not the model. It is the data the model operates on and the context it has access to.

They are designed with explicit trust architecture. The best AI features in production mobile apps are not designed to be invisible. They are designed to be legible. Users can see what the AI recommended and why. They can override it. They can report when it is wrong. The product design treats trust as a first-class feature requirement rather than an afterthought, and the AI feature’s interface reflects that.

Duolingo’s implementation of AI features in its Max subscription tier demonstrates all three. The AI characters users practice conversation with operate on Duolingo’s proprietary language learning curriculum, the user’s specific lesson history, their error patterns, and their fluency assessment data. The features solve a documented user problem (real conversational practice is unavailable between lessons). The AI’s behavioral basis is visible to users (they know the AI character is working from their learning record). By Q1 2025, Duolingo had reached 9.5 million paid subscribers, with AI-powered premium features as the primary driver of subscription revenue growth.

How to Build AI Features That Deliver Real Value

The decision process for AI features that consistently produce positive business outcomes follows a specific sequence. Skipping any step increases failure risk substantially.

Step 1: Define the problem with precision

Write a one-paragraph description of the specific friction point in the user journey this AI feature addresses. Include: what users currently do that is inefficient, how often they encounter this friction, what they would do differently if the friction were removed, and how you know this is real rather than assumed. If you cannot write this paragraph with specifics from user research, user interviews, or behavioral analytics, the feature does not have a validated problem to solve. Do not proceed.

Step 2: Ask whether AI is the right solution

Three conditions qualify a problem for an AI solution rather than a conventional software solution. First, the inputs are unstructured (natural language, images, audio, complex behavioral signals) in a way that rule-based logic cannot process reliably. Second, the appropriate output varies by user or context in ways that static logic cannot anticipate. Third, AI will demonstrably outperform the available non-AI alternative on the specific metric that matters (user task completion time, recommendation click-through rate, error rate in a process, etc.). If all three conditions are not met, a simpler solution is cheaper, faster to build, more predictable, and easier to maintain.

Step 3: Audit your data

The AI feature’s quality ceiling is determined entirely by the quality of the data it operates on. Before architectural decisions, answer these questions: What data does this feature require to produce useful outputs? Does that data exist in your product? Is it structured appropriately for AI consumption? Is it current, accurate, and sufficiently large to support the model? What are the privacy and regulatory obligations around using it? Organizations that skip this step invest in AI infrastructure and then discover their training data is too sparse, too stale, or too unstructured to produce reliable outputs. According to the Gartner analysis that Pertama Partners cited in their 2026 AI project failure review, 60% of AI projects with insufficient data foundations are abandoned before reaching production.

Step 4: Define success metrics before building

Specify the exact metrics this AI feature must improve to justify its ongoing infrastructure cost. Be specific: not “improve engagement” but “increase Day 7 retention by 8% among users who interact with the feature at least twice in the first session.” The business case for approving continued investment should rest on whether those metrics move within a defined time window, typically one quarter. Teams that define metrics post-launch consistently rationalize underperformance rather than making the architectural changes that would address it.

Step 5: Build the smallest version that tests the core hypothesis

Before building the full feature, build the minimum version that can produce real user behavior data. That minimum version does not need to be the production AI architecture. It can be a manually curated experience that simulates what the AI will eventually do, tested with a small user cohort. If users engage with the simulated version and the engagement data supports the core hypothesis, proceed to building the AI system. If they do not, the hypothesis is wrong and no amount of AI sophistication will fix it.

Step 6: Design the trust layer explicitly

For every AI output the user sees, answer these questions in the design: Does the user know this was generated by AI? Can they understand the basis for the recommendation or output? Can they provide feedback when it is wrong? Can they override it without friction? The answers to these questions define the trust architecture. Build all four mechanisms before launch, not after the first trust-related support issue.

Step 7: Cost model the feature at scale

Before committing to the AI architecture, calculate the monthly inference cost at three adoption scenarios: 10% of your current active users interacting once per day, 30% interacting twice per day, and 60% interacting three times per day. If any of those scenarios produces a monthly cost that does not survive a conversation with your CFO, either the architecture needs to change (smaller model, hybrid on-device and cloud approach, caching strategy) or the business case does not support the feature.

AI Feature Types That Work and Which Ones Rarely Do

Not all AI feature categories have the same track record in mobile apps. The patterns across successful and unsuccessful implementations are consistent enough to be useful as planning filters.

Personalization features consistently deliver when they operate on sufficient behavioral data and the personalization logic has enough surface area to produce meaningfully different outputs across users. Recommendation engines in apps with rich interaction histories (fitness tracking, e-commerce, media streaming) produce measurable retention improvement. Personalization features in apps where users have limited interaction history, or where the personalized output is functionally identical across most users, rarely justify their infrastructure cost.

Predictive features that surface information before the user explicitly requests it work when the prediction has high accuracy and the user journey has a clear context window where the prediction is relevant. Spotify’s use of on-device sensors to detect post-run state and auto-queue recovery playlists works because the prediction is accurate (the sensor data is reliable), the context is clear (the user just finished running), and the value is immediate (a relevant playlist is exactly what the user wants in that moment). Predictive features that surface low-accuracy predictions in ambiguous contexts train users to ignore them.

Conversational AI features built on general-purpose capabilities without domain-specific data rarely outperform what users can achieve with free public tools. Conversational AI features built on proprietary knowledge (a healthcare app’s clinical guidelines, a legal app’s jurisdiction-specific case law, a financial app’s real-time account data) can produce value that generic assistants cannot replicate. The differentiation test is simple: if the same query in ChatGPT produces an equivalent or better answer, the feature should not exist.

Automated workflow features that execute multi-step tasks on the user’s behalf without requiring explicit instructions at each step are the fastest-growing high-value category in 2026 enterprise mobile apps. These agentic features (form pre-filling using behavioral context, smart document routing, context-aware notification timing) deliver measurable time savings when the task profile is repetitive, the user’s intent is predictable, and the automation reduces friction the user already finds frustrating.

AI features that replicate existing non-AI features with a “smart” label almost universally fail to improve the metrics they are evaluated against. Adding AI to a search function that already worked, putting AI labels on filter recommendations the algorithm has produced for years, or wrapping a rules-based system in a conversational interface does not improve user outcomes. It adds complexity and cost to systems that were working.

Cost Structures for AI in Mobile Apps

Accurate cost modeling is the single capability gap that most frequently converts promising AI initiatives into canceled ones. The published pricing for AI model APIs represents a small fraction of the total cost of operating an AI feature in a production mobile app.

The complete cost structure has six components.

Model inference costs are the most visible and most frequently underestimated. Pricing for frontier AI models in 2026 ranges from a few cents per million tokens for lightweight models to several dollars per million tokens for the most capable reasoning models. At production scale, those costs aggregate rapidly. A feature processing 50,000 user queries per day using a mid-tier model with average 2,000-token context windows will generate inference costs between $3,000 and $25,000 per month depending on model selection, before any other cost components are added.

Retrieval-augmented generation infrastructure adds to model inference costs whenever the AI feature requires access to proprietary data not contained in the model’s training. The vector database storage, embedding generation, and retrieval operations required to give the AI access to your product’s data can add 30 to 60% to the base inference cost for features where retrieval is frequent.

Retry and error handling costs are rarely modeled explicitly but consistently materialize in production. AI features with reliability below 100% generate retry requests when the first call fails or times out. In high-volume features, those retries add 15 to 25% to inference costs.

Content moderation and output validation adds cost for any AI feature where the output is user-facing and accuracy matters for safety, compliance, or trust. Healthcare apps, financial apps, and any app where the AI’s outputs influence decisions with real consequences need validation layers that consume additional inference calls.

Observability and monitoring infrastructure is non-negotiable for AI features in production but rarely appears in initial cost models. Capturing input-output pairs for quality monitoring, flagging anomalous outputs, measuring latency, and maintaining the dashboards required to understand whether the feature is performing as expected adds engineering time and infrastructure cost that varies widely but consistently lands between 15 and 25% of total AI feature operating cost.

Model updates and retraining are the long-term cost that most initial business cases omit entirely. AI features degrade over time as user behavior changes, as the underlying app changes around them, and as the data distribution they were built on diverges from the data they see in production. Features that are not actively maintained through periodic retraining and prompt refinement accumulate a quality debt that eventually produces the trust failures discussed earlier.

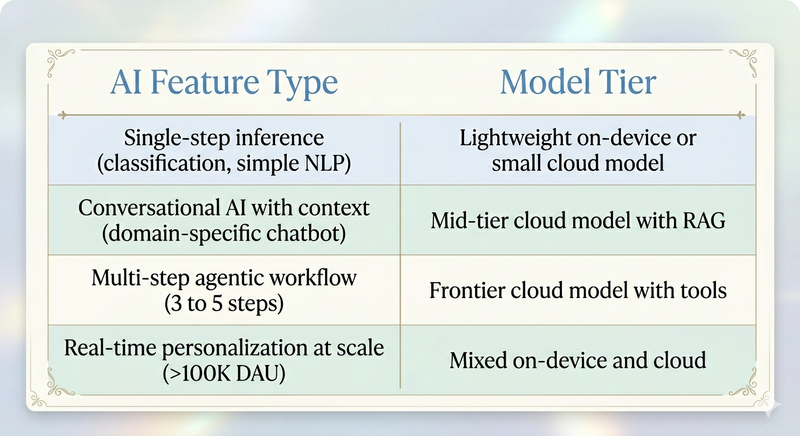

The table below provides planning benchmarks based on architecture complexity.

These ranges assume 10,000 to 50,000 daily active users interacting with the feature. They scale proportionally with engagement.

The architecture decision with the largest cost impact is the on-device versus cloud processing split. On-device AI runs inference on the device’s neural processing unit using lightweight models through Apple Core ML or Google ML Kit. No server call is required, no data leaves the device, and latency is under 100 milliseconds for models under 100MB. The trade-off is that on-device models are substantially less capable than cloud models for tasks requiring complex reasoning or access to proprietary data. The optimal production architecture for most apps in 2026 is a hybrid approach: on-device models for fast, frequent, privacy-sensitive tasks, and cloud calls for heavy reasoning tasks where latency of one to three seconds is acceptable.

Governance, Privacy, and Compliance in Mobile AI

The governance requirements for AI features in mobile apps became substantially more concrete in 2026 with the EU AI Act entering full enforcement in August. For product teams at mobile app development companies, the implications are specific and operational rather than theoretical.

The EU AI Act establishes risk-based classification for AI systems. Mobile apps that use AI to make decisions affecting users in regulated categories (health, finance, employment, education, legal) are subject to conformity assessments, human oversight requirements, and mandatory transparency documentation. Apps that use AI to generate content must label that content as AI-generated. Any AI system that manipulates users through techniques exploiting psychological vulnerabilities is prohibited.

Beyond the EU AI Act, the data privacy obligations specific to AI features in mobile apps are stricter than those for conventional app features because AI features typically require richer data inputs to function well. The tension between data richness and privacy compliance is not resolvable by legal review alone. It requires architectural decisions about what data the AI accesses, where it is processed, how long it is retained, and what user controls exist over that data.

According to Thales’ 2026 Digital Trust Index, 69% of consumers trust companies more when they demonstrate transparent data handling practices. For AI features, transparent data handling means users know what data the AI uses, why, and how to limit or withdraw that data. Building those controls is not optional. According to Forrester’s 2025 data on app trust and churn, apps that cannot clearly answer user questions about how their AI features use personal data experience 40% higher churn among the users who engage with those features and then form doubts.

On the compliance architecture side, AI features in regulated industries require audit logging that captures every AI decision with sufficient context to reconstruct the reasoning. Healthcare apps using AI for clinical suggestions, financial apps using AI to influence investment or spending behavior, and insurance apps using AI in underwriting or claims contexts all operate under obligations that require the ability to explain specific AI outputs to regulators or users on demand. Building audit infrastructure retroactively is possible but typically costs three to four times more than building it as part of the original feature architecture.

For mobile app development teams, the practical compliance checklist before launching an AI feature in 2026 is this: confirm whether the feature’s use case falls under the EU AI Act’s high-risk category; document what personal data the AI processes and under what legal basis; implement user-facing transparency about the AI feature’s role in the product experience; build human override mechanisms for any AI output with consequential impact; create audit logs for AI decisions in regulated categories; and validate that data retention practices for AI training and inference logs comply with applicable privacy law.

How PrimeFirms Builds AI Features for Mobile Apps

At PrimeFirms, the first question we ask when a mobile app development engagement involves AI features is whether the problem has been validated without AI first.

That question is not reflexive skepticism about AI capability. It is recognition that the decision to use AI should follow from a validated problem, not precede one. Every engagement where we have started with that question has produced better outcomes than engagements where AI was already committed to the roadmap before the problem was understood.

Our mobile AI feature development process starts with three validation steps that happen before any architectural decisions. We document the specific user behavior the AI feature is intended to change. We verify that the required data exists and is suitable for AI use. We test the core hypothesis with the minimum viable version that produces real behavioral data rather than stakeholder confidence.

Once validation is complete, our architectural approach follows the rules established in our 50-page SEO framework for this blog: the feature is built around the proprietary data and context that makes it genuinely differentiated from general-purpose tools, the trust layer is designed as a first-class feature requirement rather than a post-launch retrofit, and the cost model at scale is reviewed before infrastructure commitments are made.

We work across iOS, Android, and cross-platform environments using React Native and Flutter, and we build AI features using the model stack appropriate to the specific use case rather than defaulting to the most prominent framework. On-device inference via Core ML and ML Kit for features where privacy, latency, and offline capability matter. Cloud inference with appropriate RAG infrastructure for features requiring complex reasoning or access to proprietary knowledge bases.

Our delivery model includes the observability and governance infrastructure required to operate AI features in regulated industries. For healthcare, fintech, and insurance clients, that means EU AI Act-aligned documentation, audit logging for AI decisions, and human oversight mechanisms built into the feature architecture before the first line of production code is written.

For mobile product teams ready to build AI features that improve retention rather than just appearing on a roadmap, PrimeFirms offers a complimentary AI feature readiness assessment covering problem validation, data readiness, cost modeling at scale, and compliance requirements. [Request your AI feature assessment.]

Frequently Asked Questions About AI in Mobile App Development

Why do AI features fail in mobile apps even when the underlying technology works?

The technology working is not the same as the feature working. A machine learning model can produce technically correct outputs and still fail to improve any business or user metric if it was built to solve a problem users do not actually have, cannot be trusted by users who encounter its outputs, or produces outputs users could get more easily elsewhere.

The failure pattern most teams encounter starts at the planning stage rather than the engineering stage. When the decision to add AI is made before the problem it should solve has been validated with real user research, the result is a feature that demonstrates AI capability without creating user value. Gartner’s analysis of more than 3,400 enterprises in January 2025 found that 73% of failed AI projects lacked clear alignment between technical teams and business stakeholders on what the AI feature was supposed to accomplish. That misalignment is a planning failure, not a technology failure. It cannot be solved by better models, faster inference, or more sophisticated personalization algorithms.

The most reliable diagnostic for whether an AI feature will work is whether the team can articulate, with specific evidence from user research or behavioral data, what problem it solves, for which users, in which context. If that articulation produces vague answers (“it personalizes the experience,” “it makes the app smarter”), the feature needs a problem definition before it needs an architecture.

What makes an AI feature worth building versus one that should be skipped?

Three conditions determine whether an AI feature creates genuine value or replicates available alternatives. First, the feature must operate on data or context that general-purpose AI tools cannot access: your product’s proprietary behavioral data, your domain’s specialized knowledge, or real-time user context specific to your app. If the AI feature does not require data that only you have, the user can get equivalent value from a free public tool. Second, the problem the AI solves must require handling unstructured inputs, variable contexts, or adaptive outputs in ways that rule-based logic cannot manage cost-effectively. If a decision tree or a well-written algorithm would produce the same outcome, it is cheaper, more predictable, and easier to maintain. Third, the AI’s output must demonstrably outperform the best available non-AI alternative on the metric that matters most for that feature in your specific product context.

A fitness app using AI to generate training plans based on the user’s recovery status, historical performance, and injury history can produce recommendations no general-purpose AI assistant could match, because it has proprietary data about the user’s specific athletic profile. That feature meets all three conditions. A fitness app using AI to generate motivational notifications produces outputs no different from what ChatGPT could generate given the same prompt, operating on data the user could describe themselves. That feature meets none of them.

How should mobile teams evaluate whether they have enough data for an AI feature?

Data sufficiency for an AI feature has four dimensions, each of which must be evaluated before architectural commitments. Volume covers whether you have enough training examples or interaction history to support the feature’s intended function. A recommendation engine requires substantially different data volumes depending on whether it is collaborative filtering, content-based filtering, or hybrid. Quality covers whether the data is accurate, consistently labeled, and free of systematic bias that would cause the AI to replicate historical errors. Freshness covers whether the data reflects current user behavior or whether it represents historical patterns that have since changed. Structure covers whether the data is in a format the AI can use or whether it requires substantial transformation and preprocessing before it can train or contextualize a model.

The most common data failure mode is discovering after committing to an AI architecture that the data required for quality outputs either does not exist in sufficient volume or exists in a format that requires three months of data engineering to normalize. According to Gartner’s data quality analysis, organizations that skip the data audit step spend an average of 2.8 times more in remediation costs later than those that complete a data readiness assessment before beginning AI development.

What is the real total cost of an AI feature in a mobile app?

The published price for AI model inference represents the starting point, not the total. A complete cost model for an AI feature in a mobile app includes model inference (the base cost of processing each user query), retrieval infrastructure (if the AI needs to access proprietary data not in the model, vector database storage and retrieval adds 30 to 60% to base inference costs), retry and error handling (production AI features typically generate retry overhead of 15 to 25% above base inference volume), content moderation and output validation (required for any user-facing AI output in regulated categories or where factual accuracy matters), observability and monitoring (typically 15 to 25% of total operating cost), and periodic model updates and retraining as user behavior and data distribution change.

The architecture decision with the greatest impact on total cost is the split between on-device and cloud inference. On-device models running through Apple Core ML or Google ML Kit eliminate server costs entirely for lightweight inference tasks, improve privacy, and reduce latency to under 100 milliseconds. Cloud inference is necessary for tasks requiring complex reasoning or access to proprietary knowledge bases but carries ongoing costs that scale with engagement. Most production mobile AI architectures in 2026 use both: on-device for fast, frequent, privacy-sensitive operations and cloud for heavy reasoning when latency of one to three seconds is acceptable.

How does EU AI Act enforcement in 2026 affect AI features in mobile apps?

The EU AI Act entered full enforcement in August 2026, establishing specific obligations for mobile apps serving users in EU markets. The primary compliance dimensions for mobile AI features are risk classification, transparency, and human oversight. Apps using AI to make or influence decisions in high-risk categories (health, finance, education, employment, legal) must conduct conformity assessments, implement human oversight mechanisms, and maintain documentation demonstrating compliance. All AI-generated content must be labeled as such, a requirement that applies to any AI feature producing text, images, or recommendations visible to users.

For apps outside the high-risk categories, the practical compliance requirements are transparency documentation (what data the AI uses, how users can access or delete it) and prohibition compliance (no AI systems exploiting psychological vulnerabilities to manipulate user behavior). Teams building mobile apps with AI features for EU markets need legal review of their feature scope against the EU AI Act’s risk classification criteria before development begins, not before submission to app stores.

What data privacy requirements apply specifically to AI features in mobile apps?

AI features in mobile apps collect, process, and sometimes store richer user data than conventional app features. The privacy obligations attached to that data are correspondingly more demanding. Under GDPR, any personal data used to train or contextualize an AI model must have a lawful basis for processing (typically consent or legitimate interest), and users have the right to access, correct, and delete that data. For AI features, the right to explanation also applies in high-stakes decision contexts: users have the right to understand why an AI system made a specific decision affecting them.

Beyond GDPR, CCPA obligations apply for California users, PDPB requirements apply for Indian users, and sector-specific regulations apply in healthcare (HIPAA), finance (SEC AI guidance, FINRA), and others. The practical implication for mobile development teams is that privacy architecture for AI features must be designed before data collection begins, not reviewed before launch. Retroactive privacy architecture is not only more expensive than proactive design but also more likely to require feature redesign that disrupts the user experience already in production.

What is the “experience expectations gap” and how do development teams avoid it?

The experience expectations gap is the distance between what a user expects an AI feature to do and what it actually delivers. Users form expectations about AI features from two sources: general AI experiences they have already had (ChatGPT, Gemini, Siri), and the way the feature is described or presented in the product. If either source sets expectations higher than the feature can reliably meet, the gap produces distrust, disengagement, and eventual abandonment.

Teams avoid this gap through two mechanisms. First, honest product presentation: describe what the AI feature does in terms of specific, verifiable outcomes rather than broad capability claims. “Our AI recommends workouts based on your last 30 days of training data” sets accurate expectations. “Our AI knows your body” does not. Second, graceful degradation design: every AI feature should have a clear failure mode that maintains user trust when the AI produces a suboptimal output. That means showing users what the AI is working from, giving them an easy override, and actively soliciting feedback when outputs are wrong. Trust is built through consistent behavior and repaired through transparent acknowledgment of failure. Features that hide their failure modes lose user trust permanently when the inevitable imperfect output occurs.

How should mobile app teams measure whether an AI feature is working?

Measurement for AI features in mobile apps operates at three levels, and all three must be tracked before an honest assessment of feature performance is possible. The first level is direct user value metrics: is the AI feature saving users measurable time, effort, or decision friction compared to how they performed the same task before the feature existed? If the feature cannot demonstrate improvement against a specific pre-feature baseline, it is not working. The second level is business impact metrics: is the feature moving the business metrics that determine the app’s long-term viability? Day 7 and Day 30 retention, revenue per active user, and feature-driven conversion rates are the metrics that connect AI feature performance to business outcomes. If the AI feature does not affect any of these within two quarters of launch, it warrants a fundamental reassessment. The third level is AI-specific quality metrics: are the outputs factually accurate, grounded in the data provided, comprehensive enough to be useful, and relevant to the user’s actual context? These four metrics (truthfulness, groundedness, coverage, relevance) determine whether the AI is functioning as designed. Business metrics tell you whether the feature is working for the product. AI quality metrics tell you whether the AI is working as built.

What should be in place before an AI feature launches in a mobile app?

The pre-launch checklist for an AI feature in a production mobile app covers five areas. User testing with a sample reflecting the diversity of the actual user base, not internal testers who understand the feature’s scope, should validate that the feature produces useful outputs for real users across the range of inputs they actually provide. Load and reliability testing at production scale, not just anticipated scale, should confirm the feature handles peak usage without performance degradation that erodes user trust. The cost model at peak usage should be reviewed and accepted before launch, because discovering post-launch that the feature’s economics do not work requires either a disruptive architecture change or a decision to remove a feature users have already been given. Trust and transparency design should be implemented and user-tested: users should know when AI is producing outputs, understand what it is based on, and have a clear path to override or report incorrect results. Finally, for any AI feature in a regulated category or operating on personal data, legal and compliance review should be complete, not scheduled.

How is PrimeFirms different from other mobile app development companies in how it builds AI features?

Most mobile app development companies treat AI features as engineering deliverables: a specification arrives, the team builds to that specification, and the feature ships. The specification’s quality, the underlying problem validation, and the business case sustainability determine outcomes that most development partners are not accountable for.

PrimeFirms structures AI feature engagements around accountability for the outcome rather than accountability for the delivery. That means our engagement process includes problem validation before architecture decisions, data readiness assessment before infrastructure commitments, and cost modeling at scale before development begins. It also means we build the observability infrastructure required to measure whether the feature is actually working after launch, not just whether it passes acceptance criteria.

For regulated industries including healthcare, fintech, and insurance, our team brings specific experience with EU AI Act compliance requirements, HIPAA data architecture for AI systems, and audit logging infrastructure designed for regulatory review rather than internal monitoring. For mobile product teams who have experienced the pattern of AI features that passed their internal review but did not survive contact with real users at scale, a conversation with our team about what that process missed and what the rebuild should address is where we typically start.

The mobile app market in 2026 is not short of AI capability. It is short of teams willing to do the work that validates whether that capability is solving a problem worth solving, building for the users who will actually interact with it, and sustaining an economics model that holds at production scale.

The teams that do that work are the ones whose AI features appear in the Day 30 retention data rather than the quarterly feature retrospective on why something did not land. The difference between those two outcomes is not a better model. It is a better process for deciding what to build and verifying that it works before it scales.

If your mobile product roadmap includes AI features and you are not certain the problem validation, data readiness, and cost modeling have been done at the level described in this guide, that uncertainty is worth resolving before the next sprint starts.

About PrimeFirms PrimeFirms is a mobile app development company specializing in AI-powered product engineering for mid-market and enterprise clients. Our work spans iOS, Android, and cross-platform development with particular depth in AI feature architecture, regulated industry compliance, and production observability for AI systems.